K. Holt (@krisholt), May 24th, 2022

In this article: news, gear, dall-e, imagen, google, openai, artificial intelligence

Google has shown off an artificial intelligence system that can create images based on text input. The idea is that users can enter any descriptive text and the AI will turn that into an image. The company says the Imagen diffusion model, created by the Brain Team at Google Research, offers “an unprecedented degree of photorealism and a deep level of language understanding.”

This isn't the first time we've seen AI models like this. OpenAI’s DALL-E (and its successor) generated headlines as well as images because of how adeptly it can turn text into visuals. Google’s version, however, tries to create more realistic images.

To assess Imagen against other text-to-image models (including DALL-E 2, VQ-GAN+CLIP and Latent Diffusion Models), the researchers created a benchmark called DrawBench. That's a list of 200 text prompts that were entered into each model. Human raters were asked to assess each image. They “prefer Imagen over other models in side-by-side comparisons, both in terms of sample quality and image-text alignment,” Google said.

It's worth noting that the examples shown on the Imagen website are curated. As such, these may be the best of the best images that the model created. They may not accurately reflect most of the visuals that it generated.

Like DALL-E, Imagen is not available to the public. Google doesn't think it's suitable yet for use by the general population, for a number of reasons. For one thing, text-to-image models are typically trained on large datasets that are scraped from the web and are not curated, which introduces a number of problems.

“While this approach has enabled rapid algorithmic advances in recent years, datasets of this nature often reflect social stereotypes, oppressive viewpoints, and derogatory, or otherwise harmful, associations to marginalized identity groups,” the researchers wrote. “While a subset of our training data was filtered to remove noise and undesirable content, such as pornographic imagery and toxic language, we also utilized LAION-400M dataset, which is known to contain a wide range of inappropriate content including pornographic imagery, racist slurs and harmful social stereotypes.”

As a result, they said, Imagen has inherited the “social biases and limitations of large language models” and may depict “harmful stereotypes and representation.” The team said preliminary findings indicated that the AI encodes social biases, including a tendency to create images of people with lighter skin tones and to place them into certain stereotypical gender roles. Additionally, the researchers note that there is the potential for misuse if Imagen were made available to the public as is.

The team may eventually allow the public to enter text into a version of the model to generate their own images, however. “In future work we will explore a framework for responsible externalization that balances the value of external auditing with the risks of unrestricted open-access,” the researchers wrote.

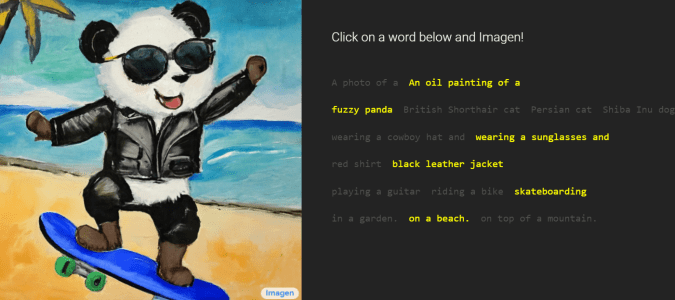

You can try Imagen on a limited basis, though. On its website, you can build a description using pre-selected phrases. Users can select whether the image should be a photo or an oil painting, the type of animal displayed, the clothing they wear, the action they’re undertaking and the setting. So if you’ve ever wanted to see an interpretation of an oil painting depicting a fuzzy panda wearing sunglasses and a black leather jacket while skateboarding on a beach, here's your chance.

Image credit: Google Research

Some parts of this article are sourced from:

engadget.com