Artificial intelligence seems to be making enormous advances. It has become the key technology behind self-driving cars and trucks, automatic translation systems, speech and text analysis, image processing and all kinds of diagnosis and recognition systems. In many cases, AI can surpass the best human performance levels at specific tasks.

We are witnessing the emergence of a new commercial industry with intense activity, huge financial investment, and huge potential. It would seem that there are no areas beyond improvement by AI: no tasks that cannot be automated, no problems that cannot at least be helped by an AI application. But is this strictly true?

Theoretical studies of computation have shown there are some things that are not computable. Alan Turing, the great mathematician and code breaker, proved that some computations might never finish (while others would take years or even centuries).

For example, we can easily compute a few moves ahead in a game of chess, but to examine all the moves to the end of a typical 80-move chess game is completely impractical. Even using one of the world’s fastest supercomputers, running at around one hundred thousand trillion operations per second, it would take over a year to get just a tiny portion of the chess space explored. This is also known as the scaling-up problem.
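A rough back-of-the-envelope sketch of that arithmetic follows. It is not the author's calculation: the average branching factor of 35 legal moves per position is a commonly quoted approximation assumed here, the 80 moves are counted as 80 single moves, and one operation per position is an optimistic simplification. All names in the code are illustrative.

```python
import math

# Assumptions for illustration only (not figures from the article,
# except the operations-per-second rate and the 80-move game length).
BRANCHING_FACTOR = 35          # assumed average number of legal moves per position
GAME_LENGTH_PLIES = 80         # the 80-move game, counted as 80 single moves
OPS_PER_SECOND = 1e17          # one hundred thousand trillion operations per second
SECONDS_PER_YEAR = 365 * 24 * 3600


def log10_positions(branching: int, plies: int) -> float:
    """Base-10 logarithm of the number of move sequences of the given length."""
    return plies * math.log10(branching)


def log10_explored(years: float, ops_per_second: float) -> float:
    """Base-10 logarithm of positions examined, at one operation per position."""
    return math.log10(years * SECONDS_PER_YEAR * ops_per_second)


def plies_searchable(years: float, ops_per_second: float, branching: int) -> int:
    """Deepest full-width search (in single moves) that fits in the time budget."""
    return math.floor(log10_explored(years, ops_per_second) / math.log10(branching))


if __name__ == "__main__":
    total = log10_positions(BRANCHING_FACTOR, GAME_LENGTH_PLIES)
    done = log10_explored(1, OPS_PER_SECOND)
    depth = plies_searchable(1, OPS_PER_SECOND, BRANCHING_FACTOR)
    print(f"move sequences to examine: about 10^{total:.0f}")
    print(f"positions examined in one year: about 10^{done:.1f}")
    print(f"full-width search depth reachable in one year: {depth} moves")
```

Under these assumptions the full game tree holds on the order of 10^123 move sequences, while a year of computing covers fewer than 10^25 positions, enough to look only about 15 single moves ahead exhaustively.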

Early AI research often produced good results on small numbers of combinations of a problem (like noughts and crosses, known as toy problems) but would not scale up to larger ones like chess (real-life problems). Fortunately, modern AI has developed alternative ways of dealing with such problems. These can beat the world’s best human players, not by looking at all possible moves ahead, but by looking a lot further than the human mind can manage. They do this using methods involving approximations, probability estimates, large neural networks and other machine-learning techniques.

But these are really problems of computer science, not artificial intelligence. Are there any fundamental limits on AI performing intelligently? A serious issue becomes clear when we consider human-computer interaction. It is widely expected that future AI systems will communicate with and assist humans in friendly, fully interactive, social exchanges.

Theory of mind

Of course, we already have primitive versions of such systems. But audio-command systems and call-centre-style script-processing just pretend to be conversations. What is needed are proper social interactions, involving free-flowing conversations over the long term during which AI systems remember the person and their past conversations. AI will have to understand intentions and beliefs and the meaning of what people are saying.

This requires what is known in psychology as a theory of mind: an understanding that the person you are engaged with has a way of thinking, and roughly sees the world in the same way as you do. So when someone talks about their experiences, you can identify and appreciate what they describe and how it relates to yourself, giving meaning to their comments.

We also observe the person’s actions and infer their intentions and preferences from gestures and signals. So when Sally says, “I think that John likes Zoe but thinks that Zoe finds him unsuitable”, we know that Sally has a first-order model of herself (her own thoughts), a second-order model of John’s thoughts, and a third-order model of what John thinks Zoe thinks. Notice that we need to have similar experiences of life to understand this.
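The nesting in Sally's statement can be made concrete with a small recursive data structure. This is only an illustrative sketch, not a model taken from the article or from the psychology literature: the `Belief` class and the `order` function are hypothetical names, and the order is counted the way the paragraph above counts it, with a person's own thought as first-order.

```python
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class Belief:
    """A hypothetical nested belief: `holder` thinks `content`.

    `content` is either a plain statement or another Belief, which is
    what lets one mind contain a model of another mind.
    """
    holder: str
    content: Union["Belief", str]


def order(belief: Belief) -> int:
    """Count how many minds are nested, starting at 1 for one's own thought."""
    if isinstance(belief.content, Belief):
        return 1 + order(belief.content)
    return 1


# Sally's own thought: a first-order model of herself.
own_thought = Belief("Sally", "I have an opinion about John and Zoe")

# Sally's model of John's thoughts: second-order.
john_likes_zoe = Belief("Sally", Belief("John", "I like Zoe"))

# Sally's model of what John thinks Zoe thinks: third-order.
john_on_zoe = Belief("Sally", Belief("John", Belief("Zoe", "John is unsuitable")))

if __name__ == "__main__":
    for b in (own_thought, john_likes_zoe, john_on_zoe):
        print(order(b), b)
```

The structure captures only the bookkeeping of who models whom. It says nothing about how the statements at the bottom acquire meaning, which is the part the article argues depends on shared experience.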

Physical learning

It is clear that all this social interaction only makes sense to the parties involved if they have a “sense of self” and can similarly maintain a model of the self of the other agent. In order to understand someone else, it is necessary to know oneself. An AI “self model” should include a subjective perspective, involving how its body operates (for example, its visual viewpoint depends upon the physical location of its eyes), a detailed map of its own space, and a repertoire of well understood skills and actions.

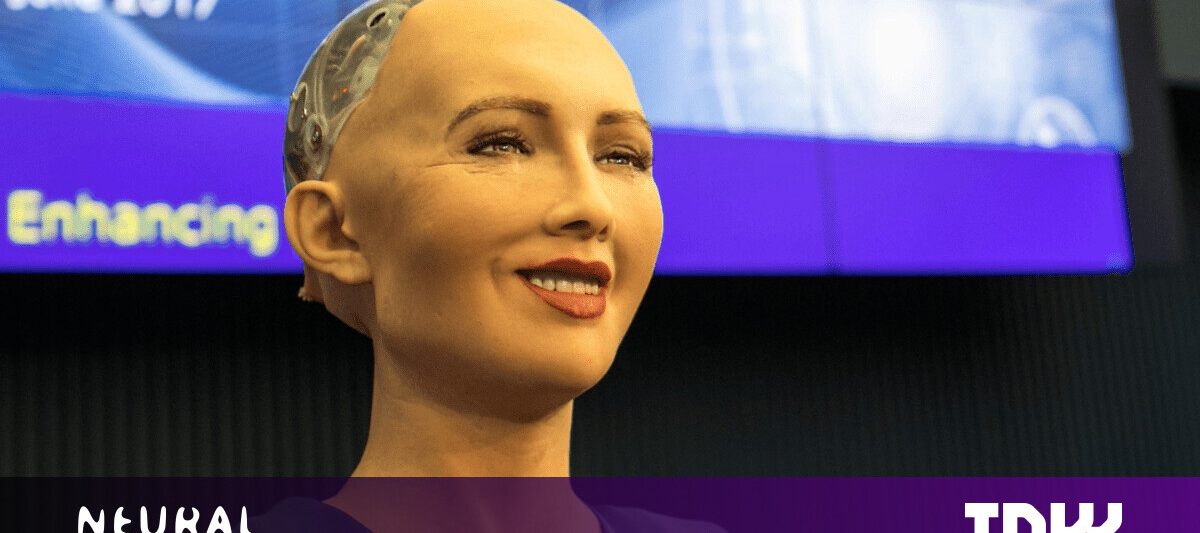

AI needs a body to develop a sense of self. Phonlamai Photo/Shutterstock

That means a physical body is required in order to ground the sense of self in concrete data and experience. When an action by one agent is observed by another, it can be mutually understood through the shared components of experience. This means social AI will need to be realised in robots with bodies. How could a software box have a subjective viewpoint of, and in, the physical world, the world that humans inhabit? Our conversational systems must be not just embedded but embodied.

A designer can’t effectively build a software sense-of-self for a robot. If a subjective viewpoint were designed in from the outset, it would be the designer’s own viewpoint, and it would also need to learn and cope with experiences unknown to the designer. So what we need to design is a framework that supports the learning of a subjective viewpoint.

Fortunately, there is a way out of these difficulties. Humans face exactly the same problems but they don’t solve them all at once. The first years of infancy display incredible developmental progress, during which we learn how to control our bodies and how to perceive and experience objects, agents and environments. We also learn how to act and the consequences of acts and interactions.

Research in the new field of developmental robotics is now exploring how robots can learn from scratch, like infants. The first stages involve discovering the properties of passive objects and the “physics” of the robot’s world. Later on, robots note and copy interactions with agents (carers), followed by gradually more complex modelling of the self in context. In my new book, I explore the experiments in this field.

So while disembodied AI definitely has a fundamental limitation, future research with robot bodies may one day help create lasting, empathetic, social interactions between AI and humans.

This article is republished from The Conversation by Mark Lee, Emeritus Professor in Computer Science, Aberystwyth University under a Creative Commons license. Read the original article.

Some elements of this post are sourced from:

thenextweb.com