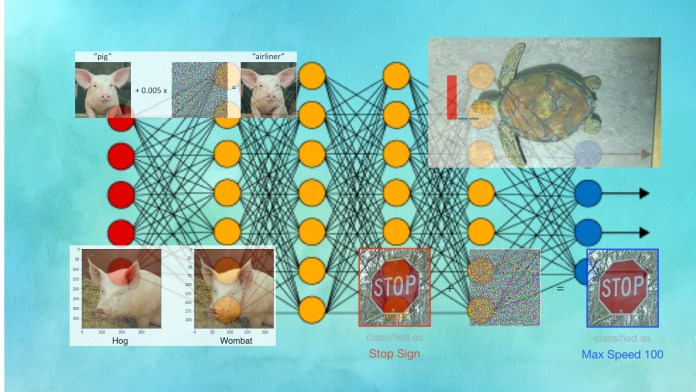

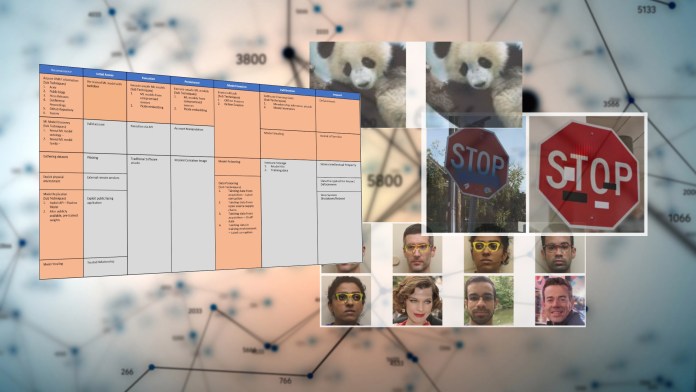

If you’ve been following news about artificial intelligence, you’ve probably heard of or seen modified images of pandas, turtles, and stop signs that look ordinary to the human eye but cause AI systems to behave erratically. Known as adversarial examples or adversarial attacks, these images, along with their audio and textual counterparts, have become a source of growing interest and concern for the machine learning community.

But despite the growing body of research on adversarial machine learning, the numbers show that there has been little progress in tackling adversarial attacks in real-world applications.

The fast-expanding adoption of machine learning makes it paramount that the tech community traces a roadmap to secure AI systems against adversarial attacks. Otherwise, adversarial machine learning can be a disaster in the making.

AI researchers discovered that by adding small black and white stickers to stop signs, they could make them invisible to computer vision algorithms (Source: arxiv.org)

What makes adversarial attacks different?

Every type of software has its own unique security vulnerabilities, and with new trends in software, new threats emerge. For instance, as web applications with database backends started replacing static websites, SQL injection attacks became commonplace. The widespread adoption of browser-side scripting languages gave rise to cross-site scripting attacks. Buffer overflow attacks overwrite critical variables and execute malicious code on target computers by taking advantage of the way programming languages such as C handle memory allocation. Deserialization attacks exploit flaws in the way programming languages such as Java and Python transfer information between applications and processes. And more recently, we’ve seen a surge in prototype pollution attacks, which use peculiarities in the JavaScript language to cause erratic behavior on NodeJS servers.

In this regard, adversarial attacks are no different from other cyberthreats. As machine learning becomes an important component of many applications, bad actors will look for ways to plant and trigger malicious behavior in AI models.

What makes adversarial attacks different, however, is their nature and the possible countermeasures. For most security vulnerabilities, the boundaries are very clear. Once a bug is found, security analysts can precisely document the conditions under which it occurs and find the part of the source code that is causing it. The response is also straightforward. For instance, SQL injection vulnerabilities are the result of not sanitizing user input. Buffer overflow bugs happen when you copy string arrays without setting limits on the number of bytes copied from the source to the destination.
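To illustrate how precisely a code-level flaw can be located and fixed, here is a minimal sketch of SQL injection using Python’s built-in `sqlite3` module. The table, the sample rows, and the function names `find_user_unsafe` and `find_user_safe` are hypothetical and chosen only for illustration. The fix shown is parameter binding, one common remedy, and not necessarily the only one.

```python
import sqlite3


def make_db():
    """Create an in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def find_user_unsafe(conn, name):
    # Vulnerable: user input is pasted directly into the SQL string.
    query = "SELECT name, secret FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Fixed: the driver binds the value, so it is never parsed as SQL.
    return conn.execute(
        "SELECT name, secret FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = make_db()
payload = "nobody' OR '1'='1"
leaked = find_user_unsafe(conn, payload)  # the injected OR clause matches every row
blocked = find_user_safe(conn, payload)   # no user has this literal name
```

The vulnerability lives in one identifiable line (the string concatenation), and the patch replaces exactly that line. That is the kind of precision the rest of this article argues is missing for adversarial vulnerabilities.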

In most cases, adversarial attacks exploit peculiarities in the learned parameters of machine learning models. An attacker probes a target model by meticulously making changes to its input until it produces the desired behavior. For instance, by making gradual changes to the pixel values of an image, an attacker can cause a convolutional neural network to change its prediction from, say, “turtle” to “rifle.” The adversarial perturbation is usually a layer of noise that is imperceptible to the human eye.
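The idea of gradually nudging pixel values until the prediction flips can be sketched on a toy model. The example below is an assumption-laden illustration, not a real attack on a convolutional network: it uses a hand-built linear classifier over a hypothetical 100-pixel “image,” and it steps each pixel along the sign of the gradient, in the style of the fast gradient sign method. All names and numbers are invented for the sketch.

```python
def score(weights, bias, pixels):
    """Linear score; positive means 'turtle', otherwise 'rifle'."""
    return sum(w * p for w, p in zip(weights, pixels)) + bias


def predict(weights, bias, pixels):
    return "turtle" if score(weights, bias, pixels) > 0 else "rifle"


def sign(value):
    return (value > 0) - (value < 0)


def perturb_until_flipped(weights, bias, pixels, step=0.01, max_steps=100):
    """Nudge every pixel slightly against the score until the label flips."""
    adversarial = list(pixels)
    for _ in range(max_steps):
        if predict(weights, bias, adversarial) == "rifle":
            break
        # For a linear model, the gradient of the score with respect to
        # pixel i is simply weights[i]; pixel values stay within [0, 1].
        adversarial = [
            min(1.0, max(0.0, p - step * sign(w)))
            for p, w in zip(adversarial, weights)
        ]
    return adversarial


# Hypothetical image and hand-picked model parameters.
weights = [0.1 if i % 2 == 0 else -0.1 for i in range(100)]
bias = 0.45
image = [0.5] * 100

adversarial_image = perturb_until_flipped(weights, bias, image)
largest_change = max(abs(a - b) for a, b in zip(adversarial_image, image))
```

In this sketch the label flips from “turtle” to “rifle” while no single pixel moves by more than a few hundredths on a 0-to-1 scale. Many tiny changes add up across all inputs, which is why the perturbation can stay visually negligible.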

(Note: in some cases, such as data poisoning, adversarial attacks are made possible through vulnerabilities in other components of the machine learning pipeline, such as a tampered training data set.)

A neural network thinks this is a picture of a rifle. The human vision system would never make this mistake (source: LabSix)

The statistical nature of machine learning makes it difficult to find and patch adversarial attacks. An adversarial attack that works under some conditions might fail in others, such as a change of angle or lighting conditions. Also, you can’t point to a line of code that is causing the vulnerability because it is spread across the thousands or millions of parameters that constitute the model.

Defenses against adversarial attacks are also a bit fuzzy. Just as you can’t pinpoint a location in an AI model that is causing an adversarial vulnerability, you also can’t find a precise patch for the bug. Adversarial defenses usually involve statistical adjustments or general changes to the architecture of the machine learning model.

For instance, one popular method is adversarial training, where researchers probe a model to produce adversarial examples and then retrain the model on those examples and their correct labels. Adversarial training readjusts all the parameters of the model to make it robust against the types of examples it has been trained on. But with enough rigor, an attacker can find other noise patterns to create adversarial examples.
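The probe-then-retrain loop of adversarial training can be sketched in a few lines. What follows is a minimal illustration under stated assumptions: a tiny logistic regression written in plain Python stands in for a neural network, the two-cluster data set is synthetic, and the helper names (`train`, `adversarial_copy`) and the value of `epsilon` are hypothetical. It shows only the mechanics and makes no claim about how much robustness is gained.

```python
import math
import random


def sign(value):
    return (value > 0) - (value < 0)


def predict_proba(weights, bias, x):
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def train(data, epochs=200, lr=0.1):
    """Plain logistic regression fitted with stochastic gradient descent."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in data:
            error = predict_proba(weights, bias, x) - y
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
            bias -= lr * error
    return weights, bias


def adversarial_copy(weights, bias, x, y, epsilon):
    """Shift each feature by epsilon in the direction that increases the loss."""
    error = predict_proba(weights, bias, x) - y
    # For the logistic loss, d(loss)/d(x_i) = (p - y) * w_i.
    return [xi + epsilon * sign(error * w) for xi, w in zip(x, weights)]


# Synthetic two-cluster data: label 0 near (-1, -1), label 1 near (1, 1).
random.seed(0)
clean = [
    ([random.gauss(center, 0.3), random.gauss(center, 0.3)], label)
    for center, label in [(-1.0, 0), (1.0, 1)]
    for _ in range(20)
]

# Step 1: train a model, then probe it to craft adversarial examples.
weights, bias = train(clean)
epsilon = 0.2
adversarial = [
    (adversarial_copy(weights, bias, x, y, epsilon), y) for x, y in clean
]

# Step 2: retrain on the clean data plus the adversarial examples,
# each paired with its correct label.
robust_weights, robust_bias = train(clean + adversarial)
```

Note that the retrained model has only seen perturbations of size `epsilon` generated against the first model. As the paragraph above points out, an attacker who searches for a different noise pattern may still succeed.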

The plain truth is, we are still learning how to cope with adversarial machine learning. Security researchers are used to perusing code for vulnerabilities. Now they must learn to find security holes in machine learning models that are composed of millions of numerical parameters.

Growing interest in adversarial machine learning

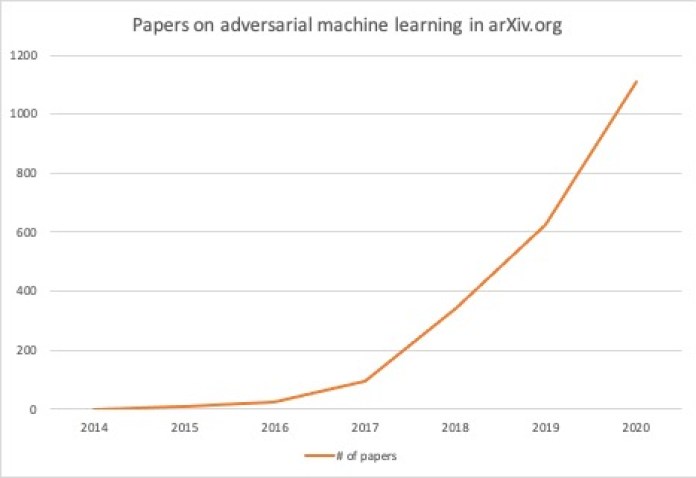

Recent years have seen a surge in the number of papers on adversarial attacks. To track the trend, I searched the arXiv preprint server for papers that mention “adversarial attacks” or “adversarial examples” in the abstract section. In 2014, there were zero papers on adversarial machine learning. In 2020, around 1,100 papers on adversarial examples and attacks were submitted to arXiv.

From 2014 to 2020, arXiv.org has gone from zero papers on adversarial machine learning to 1,100 papers in one year.

Adversarial attacks and defense methods have also become a key highlight of prominent AI conferences such as NeurIPS and ICLR. Even cybersecurity conferences such as DEF CON, Black Hat, and Usenix have started featuring workshops and presentations on adversarial attacks.

The research presented at these conferences shows tremendous progress in detecting adversarial vulnerabilities and developing defense methods that can make machine learning models more robust. For instance, researchers have found new ways to protect machine learning models against adversarial attacks using random switching mechanisms and insights from neuroscience.

It is worth noting, however, that AI and security conferences focus on cutting-edge research. And there’s a sizeable gap between the work presented at AI conferences and the practical work done at organizations every day.

The lackluster response to adversarial attacks

Alarmingly, despite growing interest in and louder warnings on the threat of adversarial attacks, there’s very little activity around tracking adversarial vulnerabilities in real-world applications.

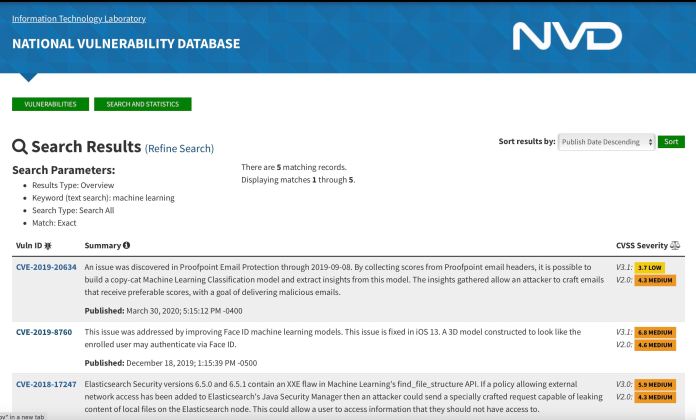

I referred to several sources that track bugs, vulnerabilities, and bug bounties. For instance, out of more than 145,000 records in the NIST National Vulnerability Database, there are no entries on adversarial attacks or adversarial examples. A search for “machine learning” returns five results. Most of them are cross-site scripting (XSS) and XML external entity (XXE) vulnerabilities in systems that contain machine learning components. One of them concerns a vulnerability that allows an attacker to create a copycat version of a machine learning model and gain insights, which could be a window to adversarial attacks. But there are no direct reports on adversarial vulnerabilities. A search for “deep learning” shows a single critical flaw filed in November 2017. But again, it’s not an adversarial vulnerability but rather a flaw in another component of a deep learning system.

The National Vulnerability Database contains very little information on adversarial attacks

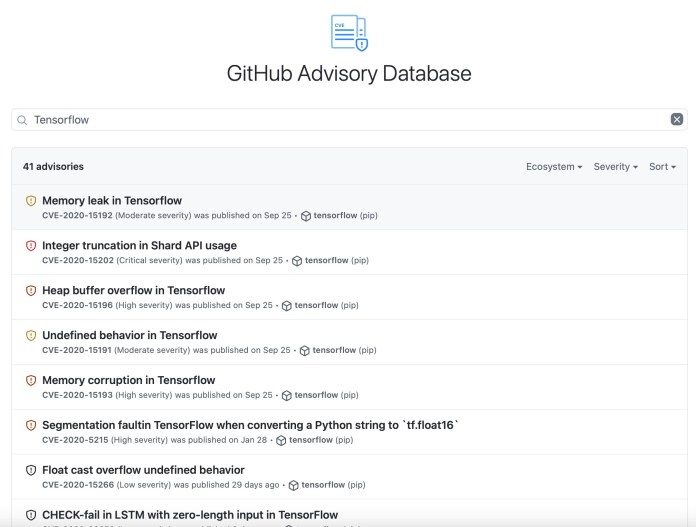

I also checked GitHub’s Advisory database, which tracks security and bug fixes on projects hosted on GitHub. Searches for “adversarial attacks,” “adversarial examples,” “machine learning,” and “deep learning” yielded no results. A search for “TensorFlow” yields 41 records, but they’re mostly bug reports on the codebase of TensorFlow. There’s nothing about adversarial attacks or hidden vulnerabilities in the parameters of TensorFlow models.

This is noteworthy for the reason that GitHub already hosts many deep learning models and pretrained neural networks.

GitHub Advisory contains no records on adversarial attacks.

Finally, I checked HackerOne, the platform many companies use to run bug bounty programs. Here too, none of the reports contained any mention of adversarial attacks.

While this might not be a very precise assessment, the fact that none of these sources have anything on adversarial attacks is very telling.

The growing threat of adversarial attacks

Adversarial vulnerabilities are deeply embedded in the many parameters of machine learning models, which makes it hard to detect them with traditional security tools.

Automated defense is another area that is worth discussing. When it comes to code-based vulnerabilities, developers have a large set of defensive tools at their disposal.

Static analysis tools can help developers find vulnerabilities in their code. Dynamic testing tools examine an application at runtime for vulnerable patterns of behavior. Compilers already use many of these techniques to track and patch vulnerabilities. Today, even your browser is equipped with tools to find and block possibly malicious code in client-side script.

At the same time, organizations have learned to combine these tools with the right policies to enforce secure coding practices. Many companies have adopted procedures and practices to rigorously test applications for known and potential vulnerabilities before making them available to the public. For instance, GitHub, Google, and Apple make use of these and other tools to vet the millions of applications and projects uploaded on their platforms.

But the tools and procedures for defending machine learning systems against adversarial attacks are still in the preliminary stages. This is partly why we’re seeing very few reports and advisories on adversarial attacks.

Meanwhile, another worrying trend is the growing use of deep learning models by developers of all levels. Ten years ago, only people who had a full understanding of machine learning and deep learning algorithms could use them in their applications. You had to know how to set up a neural network and tune the hyperparameters through intuition and experimentation, and you also needed access to the compute resources that could train the model.

But today, integrating a pretrained neural network into an application is very easy.

For instance, PyTorch, which is one of the leading Python deep learning platforms, has a tool that enables machine learning engineers to publish pretrained neural networks on GitHub and make them accessible to developers. If you want to integrate an image classifier deep learning model into your application, you only need a rudimentary knowledge of deep learning and PyTorch.

Since GitHub has no procedure to detect and block adversarial vulnerabilities, a malicious actor could easily use these kinds of tools to publish deep learning models that have hidden backdoors and exploit them after thousands of developers integrate them in their applications.

How to address the threat of adversarial attacks

Understandably, given the statistical nature of adversarial attacks, it’s difficult to address them with the same methods used against code-based vulnerabilities. But fortunately, there have been some positive developments that can guide future steps.

The Adversarial ML Threat Matrix, published last month by researchers at Microsoft, IBM, Nvidia, MITRE, and other security and AI companies, provides security researchers with a framework to find weak spots and potential adversarial vulnerabilities in software ecosystems that include machine learning components. The Adversarial ML Threat Matrix follows the ATT&CK framework, a known and trusted format among security researchers.

Another useful project is IBM’s Adversarial Robustness Toolbox, an open-source Python library that provides tools to evaluate machine learning models for adversarial vulnerabilities and help developers harden their AI systems.

These and other adversarial defense tools that will be developed in the future need to be backed by the right policies to make sure machine learning models are safe. Software platforms such as GitHub and Google Play must establish procedures and integrate some of these tools into the vetting process of applications that include machine learning models. Bug bounties for adversarial vulnerabilities can also be a good measure to make sure the machine learning systems used by millions of users are robust.

New regulations for the security of machine learning systems might also be necessary. Just as the software that handles sensitive operations and information is expected to conform to a set of standards, machine learning algorithms used in critical applications such as biometric authentication and medical imaging must be audited for robustness against adversarial attacks.

As the adoption of machine learning continues to expand, the threat of adversarial attacks is becoming more imminent. Adversarial vulnerabilities are a ticking time bomb. Only a systematic response can defuse it.

This article was originally published by Ben Dickson on TechTalks, a publication that examines trends in technology, how they affect the way we live and do business, and the problems they solve. But we also discuss the evil side of technology, the darker implications of new tech, and what we need to look out for. You can read the original article here.

Ben Dickson

Some parts of this article are sourced from:

thenextweb.com
