Losing sleep over Generative-AI apps? You are not alone, and you are not wrong. According to the Astrix Security Research Group, mid-size organizations already have, on average, 54 Generative-AI integrations to core systems like Slack, GitHub and Google Workspace, and this number is only expected to grow. Read on to understand the potential risks and how to minimize them.

Book a Generative-AI Discovery session with Astrix Security’s experts (free, no strings attached, agentless and zero friction)

“Hey ChatGPT, review and optimize our source code”

“Hey Jasper.ai, generate a summary email of all our net new customers from this quarter”

“Hey Otter.ai, summarize our Zoom board meeting”

In this era of financial turmoil, businesses and employees alike are constantly looking for tools to automate work processes and increase efficiency and productivity. They do so by connecting third-party apps to core business systems such as Google Workspace, Slack and GitHub via API keys, OAuth tokens, service accounts and more. The rise of Generative-AI apps and GPT services exacerbates this issue, with employees in all departments rapidly adding the latest and greatest AI apps to their productivity arsenal without the security team’s knowledge.

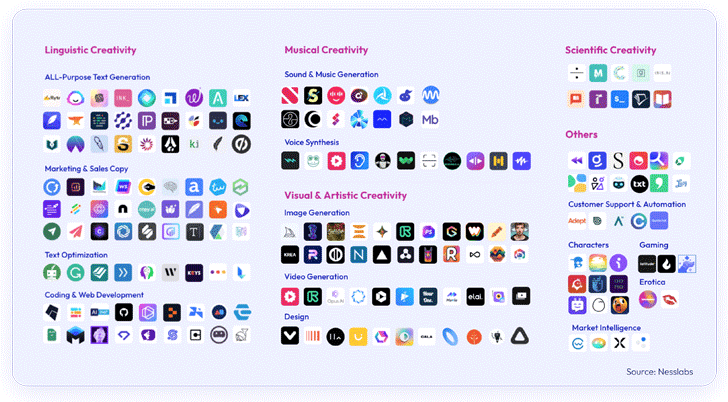

These range from engineering apps for code review and optimization to marketing, design and sales apps for content and video creation, image creation and email automation. With ChatGPT becoming the fastest-growing app in history, and AI-powered apps being downloaded 1506% more than last year, the security risks of using, and even worse, connecting these often unvetted apps to core business systems are already causing sleepless nights for security leaders.

Your organization’s app-to-app connectivity

The risks of Gen-AI apps

AI-based apps present two main concerns for security leaders:

1. Data sharing via apps like ChatGPT: The power of AI lies in data, but this very strength can be a weakness if mismanaged. Employees may unintentionally share sensitive, business-critical information, including customers’ PII and intellectual property such as code. Such leaks can expose organizations to data breaches, competitive disadvantages and compliance violations. And this is not a fable: just ask Samsung.

The Samsung and ChatGPT leaks: a case for caution

Samsung reported three different leaks of highly sensitive information by three employees who used ChatGPT for productivity purposes. One of the employees shared confidential source code to check it for errors, another shared code for optimization, and the third shared a recording of a meeting to convert into meeting notes for a presentation. All this information is now used by ChatGPT to train the AI models and can be shared across the web.

2. Unverified Generative-AI apps: Not all generative AI apps come from verified sources. Astrix’s recent research reveals that employees are increasingly connecting these AI-based apps (which usually have high-privilege access) to core systems like GitHub, Salesforce and others, raising significant security concerns.

The wide range of Generative AI apps

Real-life example of a risky Gen-AI integration:

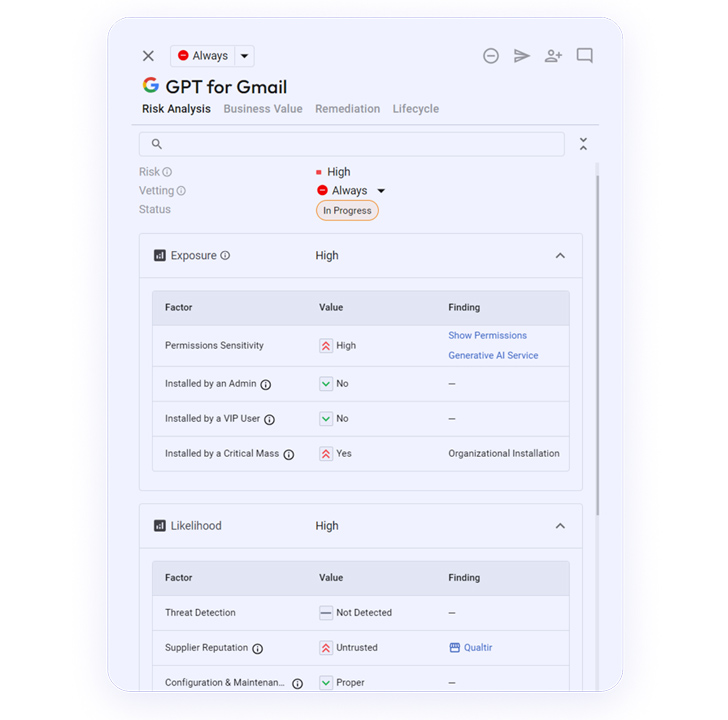

In the images below you can see the details from the Astrix platform about a risky Gen-AI integration that connects to the organization’s Google Workspace environment.

This integration, Google Workspace Integration “GPT For Gmail”, was developed by an untrusted developer and granted high permissions to the organization’s Gmail accounts:

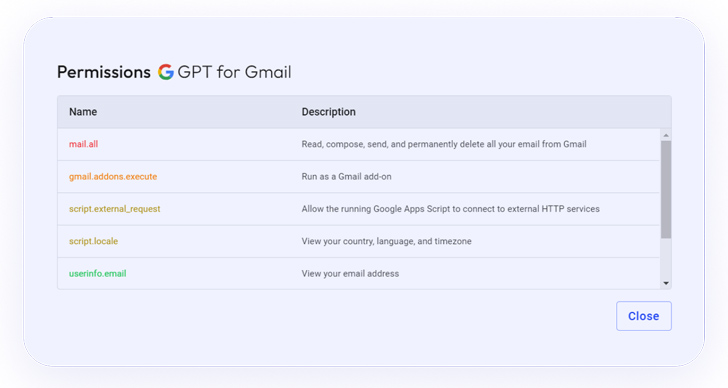

Among the scopes of the permissions granted to the integration is “mail.all”, which allows the third-party app to read, write, send and delete emails, a very sensitive privilege:

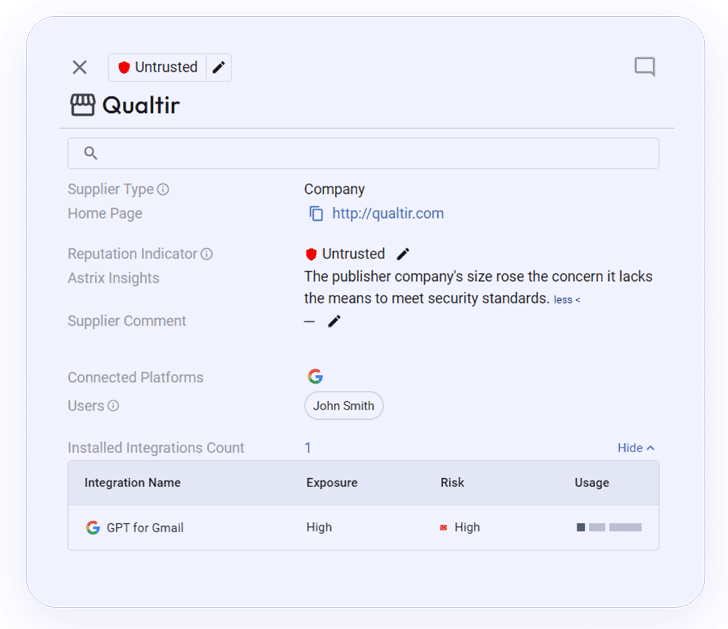
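
To make the scope concern above more concrete, here is a minimal Python sketch of how a list of connected apps could be screened for broad OAuth grants and untrusted vendors. It is not how the Astrix platform works. The `Integration` record, the `HIGH_RISK_SCOPES` set and the `flag_risky` helper are hypothetical names made up for this illustration. The scope URLs are real Google OAuth scopes, and the assumption here is that the “mail.all” label in the screenshot corresponds to Google’s full-access Gmail scope, `https://mail.google.com/`.

```python
from dataclasses import dataclass

# Scopes treated as high risk in this sketch (an illustrative, incomplete list).
HIGH_RISK_SCOPES = {
    "https://mail.google.com/",               # full Gmail access: read, compose, send, delete
    "https://www.googleapis.com/auth/drive",  # full access to Google Drive files
}


@dataclass
class Integration:
    """Hypothetical record describing one third-party app connection."""
    name: str
    vendor_trusted: bool
    scopes: list[str]


def flag_risky(integrations):
    """Return (name, reasons) pairs for integrations that warrant a review."""
    findings = []
    for app in integrations:
        reasons = []
        risky = sorted(set(app.scopes) & HIGH_RISK_SCOPES)
        if risky:
            reasons.append("high-privilege scopes: " + ", ".join(risky))
        if not app.vendor_trusted:
            reasons.append("untrusted vendor")
        if reasons:
            findings.append((app.name, reasons))
    return findings


if __name__ == "__main__":
    apps = [
        Integration("GPT For Gmail", False, ["https://mail.google.com/"]),
        Integration("Calendar Helper", True,
                    ["https://www.googleapis.com/auth/calendar.readonly"]),
    ]
    for name, reasons in flag_risky(apps):
        print(name, "->", "; ".join(reasons))
```

In this toy inventory only “GPT For Gmail” is reported, for both reasons. A real review would also need to consider who granted the access and how the app actually uses it.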

Details about the integration’s vendor, which is untrusted:

How Astrix helps minimize your AI risks

To safely navigate the exciting but complex landscape of AI, security teams need robust non-human identity management. It gives them visibility into the third-party services employees are connecting, control over permissions, and the ability to properly evaluate potential security risks. With Astrix you can now:

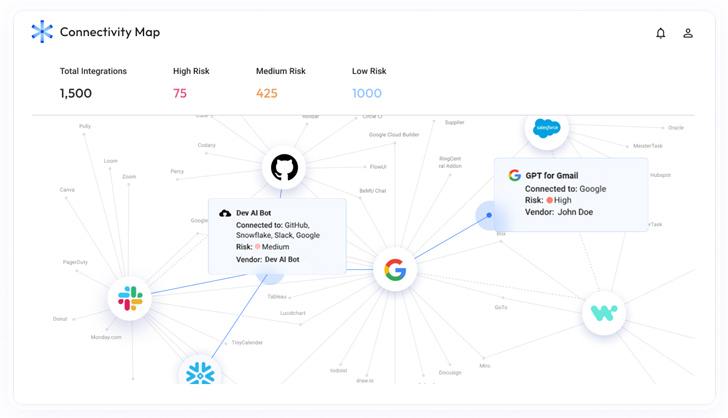

The Astrix Connectivity map

- Get a full inventory of all AI tools that your employees use and that access your core systems, and understand the risks associated with them.

- Remove security bottlenecks with automated security guardrails: understand the business value of each non-human connection, including the usage level (frequency, last maintenance, usage volume), the connection owner, who in the company uses the integration, and the marketplace info.

- Reduce your attack surface: ensure all AI-based non-human identities accessing your core systems have least-privilege access, and remove unused connections and untrusted app vendors.

- Detect anomalous activity and remediate risks: Astrix analyzes and detects malicious behavior such as stolen tokens, internal app abuse and untrusted vendors in real time through IP, user agent and access data anomalies.

- Remediate faster: Astrix takes the load off your security team with automated remediation workflows, and by instructing end users on how to resolve their security issues independently.
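
Two of the ideas in this list, removing unused connections and spotting access from an unfamiliar IP or user agent, can be illustrated with a short Python sketch. It is a deliberately naive baseline under stated assumptions, not Astrix’s detection logic. The `Connection` record, the `is_unused` and `is_anomalous` helpers and the 90-day idle threshold are all hypothetical choices made for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class Connection:
    """Hypothetical inventory entry for one non-human identity (token, key, app)."""
    name: str
    last_used: datetime
    known_ips: set[str] = field(default_factory=set)
    known_user_agents: set[str] = field(default_factory=set)


def is_unused(conn, now, max_idle_days=90):
    """A connection idle for longer than max_idle_days is a candidate for removal."""
    return now - conn.last_used > timedelta(days=max_idle_days)


def is_anomalous(conn, ip, user_agent):
    """Flag an access whose IP or user agent was never seen for this connection."""
    return ip not in conn.known_ips or user_agent not in conn.known_user_agents


if __name__ == "__main__":
    now = datetime(2023, 6, 1)
    conn = Connection(
        name="notes-bot",
        last_used=datetime(2023, 1, 10),
        known_ips={"203.0.113.7"},
        known_user_agents={"notes-bot/1.2"},
    )
    print("unused:", is_unused(conn, now))
    print("anomalous:", is_anomalous(conn, "198.51.100.9", "notes-bot/1.2"))
```

Simple set membership like this would produce many false positives in practice, for example when a vendor rotates IP addresses, so treat it as a starting point for thinking about the signals rather than a usable detector.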

Some parts of this article are sourced from:

thehackernews.com
