With machine learning becoming increasingly popular, one thing that has been worrying experts is the security threats the technology will entail. We are still exploring the possibilities: The breakdown of autonomous driving systems? Inconspicuous theft of sensitive data from deep neural networks? Failure of deep learning-based biometric authentication? Subtle bypass of content moderation algorithms?

Meanwhile, machine learning algorithms have already found their way into critical fields such as finance, health care, and transportation, where security failures can have severe repercussions.

Parallel to the increased adoption of machine learning algorithms in different domains, there has been growing interest in adversarial machine learning, the field of study that explores ways learning algorithms can be compromised.

And now, we finally have a framework to detect and respond to adversarial attacks against machine learning systems. Called the Adversarial ML Threat Matrix, the framework is the result of a joint effort between AI researchers at 13 organizations, including Microsoft, IBM, Nvidia, and MITRE.

While still in its early stages, the ML Threat Matrix provides a consolidated view of how malicious actors can take advantage of weaknesses in machine learning algorithms to target organizations that use them. Its key message is that the threat of adversarial machine learning is real and organizations should act now to secure their AI systems.

Applying ATT&CK to machine learning

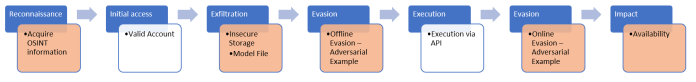

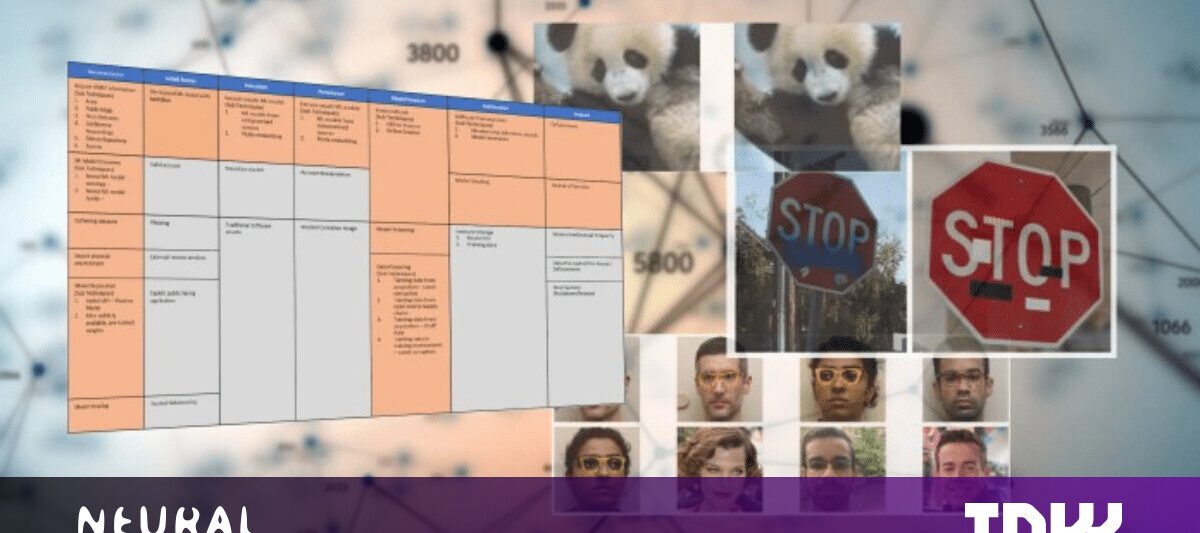

The Adversarial ML Threat Matrix is presented in the style of ATT&CK, a tried-and-tested framework developed by MITRE to deal with cyber-threats in enterprise networks. ATT&CK provides a table that summarizes different adversarial tactics and the types of techniques that threat actors perform in each area.

Since its inception, ATT&CK has become a popular guide for cybersecurity experts and threat analysts to find weaknesses and speculate on possible attacks. The ATT&CK format of the Adversarial ML Threat Matrix makes it easier for security analysts to understand the threats to machine learning systems. It is also an accessible document for machine learning engineers who might not be deeply acquainted with cybersecurity operations.

“Many industries are undergoing digital transformation and will likely adopt machine learning technology as part of service/product offerings, including making high-stakes decisions,” Pin-Yu Chen, AI researcher at IBM, told TechTalks in written comments. “The notion of ‘system’ has evolved and become more complicated with the adoption of machine learning and deep learning.”

For instance, Chen says, an automated financial loan application recommendation can change from a transparent rule-based system to a black-box neural network-oriented system, which could have considerable implications for how the system can be attacked and secured.

“The adversarial threat matrix analysis (i.e., the study) bridges the gap by offering a holistic view of security in emerging ML-based systems, as well as illustrating their causes from traditional means and new risks induced by ML,” Chen says.

The Adversarial ML Threat Matrix combines known and documented tactics and techniques used in attacking digital infrastructure with methods that are unique to machine learning systems. As in the original ATT&CK table, each column represents one tactic (or area of activity) such as reconnaissance or model evasion, and each cell represents a specific technique.

For instance, to attack a machine learning system, a malicious actor must first gather information about the underlying model (reconnaissance column). This can be done by gathering open-source information (arXiv papers, GitHub repositories, press releases, etc.) or by experimenting with the application programming interface that exposes the model.
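The column-and-cell layout described above can be pictured as a simple mapping from tactics to techniques. The following is only a minimal sketch: the names `THREAT_MATRIX`, `techniques_for`, and `find_tactics` are hypothetical, and the technique strings paraphrase the examples in this article rather than reproduce the official entries of the matrix.

```python
# Hypothetical, heavily simplified excerpt of a tactics-by-techniques matrix.
# The labels paraphrase examples from the article; they are NOT the official
# entries of the Adversarial ML Threat Matrix.
THREAT_MATRIX = {
    "reconnaissance": [
        "gather open-source information (papers, repositories, press releases)",
        "probe the public API that exposes the model",
    ],
    "model evasion": [
        "craft inputs that the model misclassifies",
    ],
}


def techniques_for(tactic):
    """Return the techniques (cells) listed under one tactic (column)."""
    return THREAT_MATRIX.get(tactic, [])


def find_tactics(keyword):
    """Return every tactic that has a technique mentioning the keyword."""
    keyword = keyword.lower()
    return [
        tactic
        for tactic, techniques in THREAT_MATRIX.items()
        if any(keyword in technique.lower() for technique in techniques)
    ]


for tactic in THREAT_MATRIX:
    print(tactic, "->", techniques_for(tactic))
```

An analyst could use a structure like this to ask which tactics a given observation, such as unusual API probing, might belong to.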

The complexity of machine learning security

Adversarial vulnerabilities are deeply embedded in the many parameters of machine learning models, which makes it hard to detect them with traditional security tools.

Every new type of technology comes with its own security and privacy implications. For instance, the advent of web applications with database backends introduced the concept of SQL injection. Browser scripting languages such as JavaScript ushered in cross-site scripting attacks. The internet of things (IoT) introduced new ways to create botnets and conduct distributed denial of service (DDoS) attacks. Smartphones and mobile apps create new attack vectors for malicious actors and spying agencies.

The security landscape has evolved and continues to develop to address each of these threats. We have anti-malware software, web application firewalls, intrusion detection and prevention systems, DDoS protection solutions, and many more tools to fend off these threats.

For instance, security tools can scan binary executables for the digital fingerprints of malicious payloads, and static analysis can find vulnerabilities in software code. Many platforms, such as GitHub and Google's app store, have already integrated many of these tools and do a good job of finding security holes in the software they host.

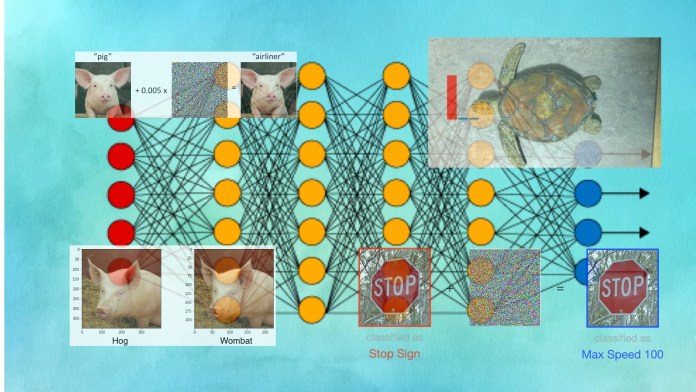

But in adversarial attacks, malicious behavior and vulnerabilities are deeply embedded in the thousands and millions of parameters of deep neural networks, which makes them both hard to find and beyond the capabilities of current security tools.
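To make the idea concrete, here is a toy illustration of a vulnerability that lives in the parameters rather than in the code. It is a sketch under stated assumptions: the three-weight linear classifier, its hand-picked values, and the function names are all hypothetical, and the perturbation follows the spirit of the fast gradient sign method (for a linear model, the gradient of the score with respect to the input is simply the weight vector).

```python
# Toy linear classifier with hand-picked (hypothetical) parameters.
WEIGHTS = [2.0, -3.0, 1.0]
BIAS = 0.5


def score(x):
    """Linear score: positive means class 1, otherwise class 0."""
    return sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS


def predict(x):
    return 1 if score(x) > 0 else 0


def sign(value):
    """Return -1, 0, or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)


def adversarial_example(x, epsilon):
    """Shift every feature by at most epsilon against the current prediction.

    If the model predicts 1, each feature moves in the direction that lowers
    the score; if it predicts 0, in the direction that raises it.
    """
    direction = -1 if predict(x) == 1 else 1
    return [xi + direction * epsilon * sign(w) for w, xi in zip(WEIGHTS, x)]


x = [1.0, 0.5, 0.2]
x_adv = adversarial_example(x, epsilon=0.25)
print("original prediction:", predict(x))
print("adversarial prediction:", predict(x_adv))
```

Nothing in this program is a bug in the conventional sense, so a static analyzer or malware scanner has nothing to flag; the weakness follows entirely from the numeric values of the weights.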

“Traditional software security usually does not involve the machine learning component because it’s a new piece in the growing system,” Chen says, adding that bringing machine learning into the security landscape yields new insights and risk assessments.

The Adversarial ML Threat Matrix comes with a set of case studies of attacks that involve traditional security vulnerabilities, adversarial machine learning, and combinations of both. What is important is that, contrary to the popular belief that adversarial attacks are limited to lab environments, the case studies show that production machine learning systems can be and have been compromised with adversarial attacks.

For instance, in one case study, the security team at Microsoft Azure used open-source data to gather information about a target machine learning model. They then used a valid account on the server to obtain the machine learning model and its training data. They used this information to find adversarial vulnerabilities in the model and develop attacks against the API that exposed its functionality to the public.

Attackers can leverage a combination of machine learning-specific techniques and traditional attack vectors to compromise AI systems.

Other case studies show how attackers can compromise various parts of the machine learning pipeline and the software stack to conduct data poisoning attacks, bypass spam detectors, or force AI systems to reveal confidential information.

The matrix and these case studies can guide analysts in finding weak spots in their software and can guide security tool vendors in creating new tools to protect machine learning systems.

“Inspecting a single dimension (machine learning vs traditional software security) only provides an incomplete security analysis of the system as a whole,” Chen says. “Like the old saying goes: security is only as strong as its weakest link.”

Machine learning developers need to pay attention to adversarial threats

Unfortunately, developers and adopters of machine learning algorithms are not taking the necessary measures to make their models robust against adversarial attacks.

“The current development pipeline is merely ensuring a model trained on a training set can generalize well to a test set, while neglecting the fact that the model is often overconfident about the unseen (out-of-distribution) data or maliciously embedded Trojan pattern in the training set, which offers unintended avenues to evasion attacks and backdoor attacks that an adversary can leverage to control or misguide the deployed model,” Chen says. “In my view, similar to car model development and manufacturing, a comprehensive ‘in-house collision test’ for different adversarial threats on an AI model should be the new norm to practice to better understand and mitigate potential security risks.”
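One way to picture such a "collision test" is to report accuracy under attack alongside ordinary test accuracy. The sketch below is an assumption-laden toy, not a method attributed to Chen or IBM: the two-weight linear model, the four data points, and the function names are hypothetical. For a linear model, the worst-case shift of the score under a per-feature perturbation of size `epsilon` is exactly `epsilon` times the sum of the absolute weights, so robust accuracy can be computed without searching for attacks.

```python
# Hypothetical toy model and labeled evaluation set.
WEIGHTS = [1.0, -1.0]
BIAS = 0.0
DATA = [
    ([2.0, 0.0], 1),
    ([0.3, 0.0], 1),
    ([0.0, 2.0], 0),
    ([0.0, 0.2], 0),
]


def score(x):
    return sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS


def label_from_score(s):
    return 1 if s > 0 else 0


def clean_accuracy(data):
    """Fraction of unmodified inputs that are classified correctly."""
    correct = sum(1 for x, label in data if label_from_score(score(x)) == label)
    return correct / len(data)


def robust_accuracy(data, epsilon):
    """Fraction still correct when each feature may shift by up to epsilon.

    The attacker pushes the score toward the wrong class by the largest
    amount the budget allows: epsilon * sum(|w|) for a linear model.
    """
    budget = epsilon * sum(abs(w) for w in WEIGHTS)
    survived = 0
    for x, label in data:
        worst = score(x) - budget if label == 1 else score(x) + budget
        if label_from_score(worst) == label:
            survived += 1
    return survived / len(data)


print("clean accuracy: ", clean_accuracy(DATA))
print("robust accuracy:", robust_accuracy(DATA, epsilon=0.2))
```

In this toy setup the model classifies every clean input correctly yet loses half of them to a small perturbation, which is the kind of gap a single accuracy figure hides.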

In his work at IBM Research, Chen has helped develop various methods to detect and patch adversarial vulnerabilities in machine learning models. With the advent of the Adversarial ML Threat Matrix, the efforts of Chen and other AI and security researchers will put developers in a better position to create secure and robust machine learning systems.

“My hope is that with this study, the model developers and machine learning researchers can pay more attention to the security (robustness) aspect of the model and look beyond a single performance metric such as accuracy,” Chen says.

This article was originally published by Ben Dickson on TechTalks, a publication that examines trends in technology, how they affect the way we live and do business, and the problems they solve. But we also discuss the evil side of technology, the darker implications of new tech and what we need to look out for. You can read the original article here.

Ben Dickson

Some parts of this article are sourced from:

thenextweb.com
