Vicky Just/University of Bath

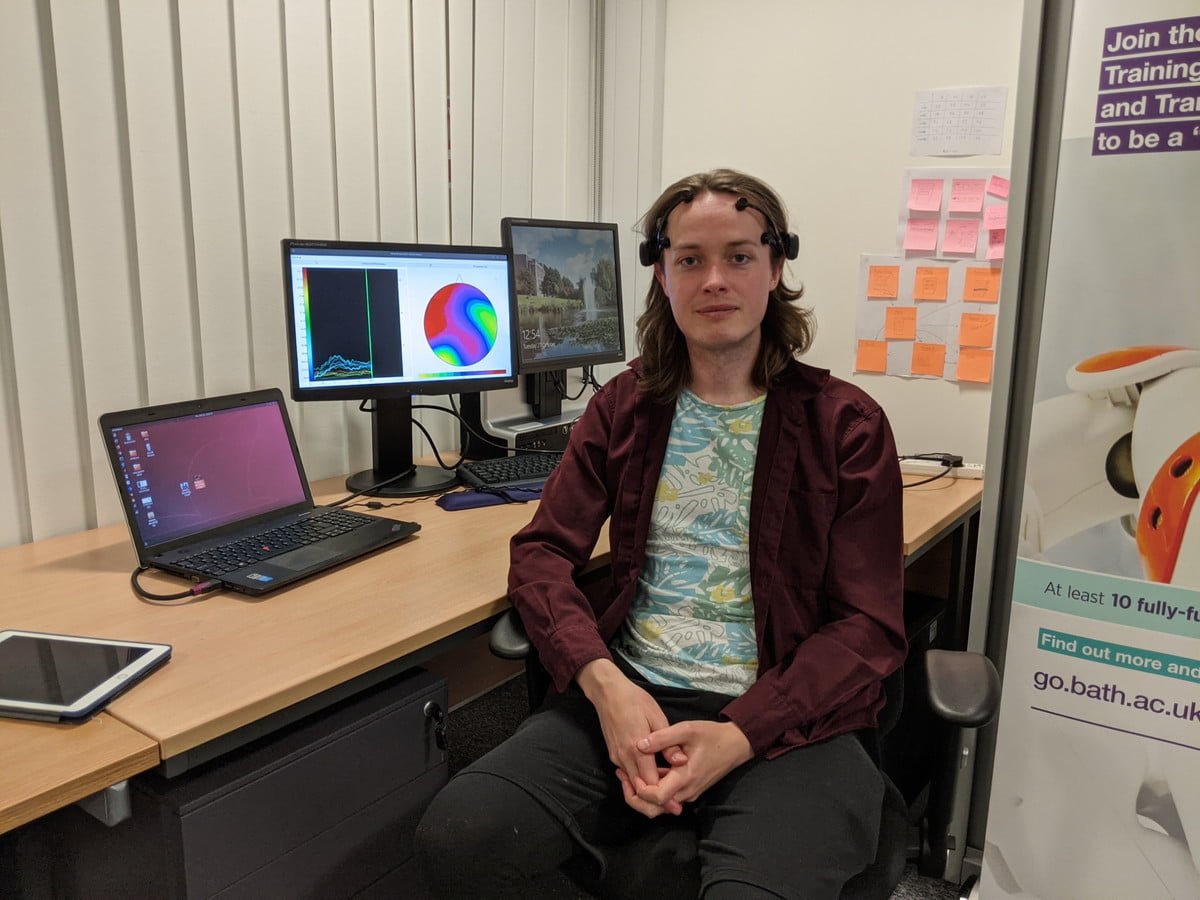

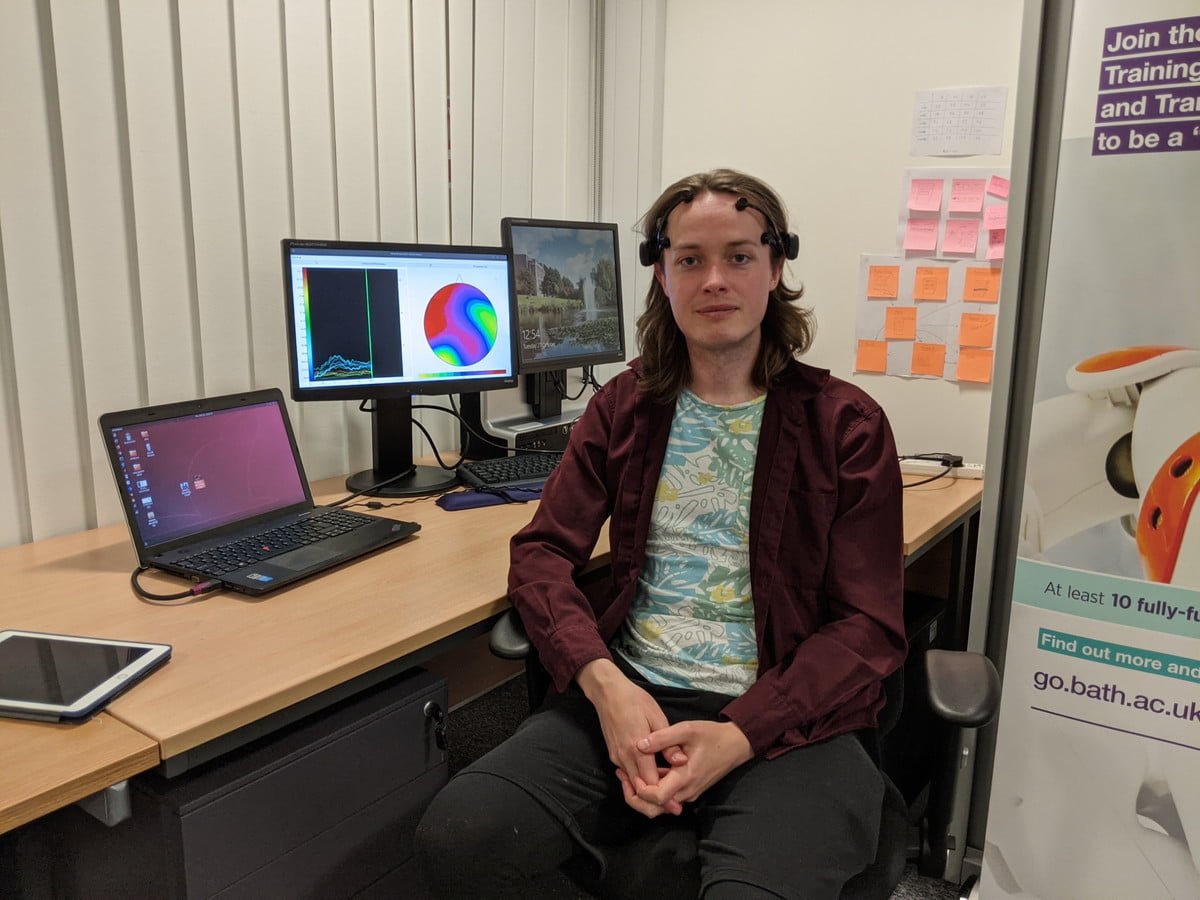

“In a nutshell,” said Scott Wellington, “we’re hoping to create a technology that can take your imagined speech (that is, you think of a word or a sentence, without moving or speaking at all) and translate your brain signals into synthesized speech of that same word or sentence.”

That’s quite a mission, but Wellington, a Ph.D. researcher at the University of Bath’s Center for Accountable, Transparent and Responsible Artificial Intelligence, might just be up to the task.

For the past several years, through his previous work at the University of Edinburgh and a startup called SpeakUnique, Wellington has been working on an ambitious, but potentially game-changing, project: creating personalized synthetic voices for those who have impaired speech or have entirely lost the ability to speak as a result of neurodegenerative conditions like Motor Neurone Disease (MND).

Synthetic voices for people with potentially debilitating conditions like MND have been around for years. Famously, the late theoretical physicist Stephen Hawking communicated using a synthesized computer voice, created for him by a Massachusetts Institute of Technology engineer named Dennis Klatt, as far back as 1984. The voice, a default male named “Perfect Paul,” could be operated using a handheld clicker that would allow him to select words from a computer. Later, when Hawking lost the use of his hands, he switched to a system that detected his facial movement.

Wellington’s work would be a step forward from this. For one thing, where recordings exist or suitable sound parts could be made, he could piece together a personalized synthetic voice that sounds like the person it is being used for. Furthermore, this voice could be controlled entirely through the user’s thoughts, all using a humble, commercially available gamer’s headset.

Promising developments

“There have already been some promising developments in the field from researchers around the world, but these have all used a process called electrocorticography, which requires a craniotomy,” Wellington said.

A craniotomy, as he points out, is invasive brain surgery. The aim of his work at the University of Bath is to achieve the result of “imagined speech recognition,” but without the need for someone to cut open your head and plant sensors onto the surface of your brain.

“For people who have lost their natural speech, one of the biggest causes of frustration is the inability to communicate their thoughts to friends and family with the same speed and naturalness as they had previously,” he said. “For instance, for people in advanced stages of MND, eye-tracking technologies can allow people with severely impaired motor control to use text-to-speech systems to communicate at around 10 words a minute, and that’s if they’re fluent users of the technology. You and I can speak 10 words in a couple of seconds. You can see why this is one of the biggest causes of frustration for people with motor impairment who have lost their speech.”

In the University of Bath setup, the gaming headset used is equipped with an EEG (electroencephalography) system to detect the wearer’s brain waves. These are then processed by a computer that uses neural networks and deep learning to identify the intended speech of the user.

“The goal is to develop a new method that allows more fluent communication by either supporting or, even better, entirely replacing the need to type out what you want to communicate, by using the brain signal to do the ‘typing’ instead,” Wellington said. “With the recent advances in engineering, machine learning, and artificial intelligence, I believe we’re at the stage to start to make this a reality.”

To train the system, volunteers wore the EEG device while a recording of their own speech was played for them. At the same time, they had to imagine saying the sound, as well as vocalize the sound. While it would be accurate to describe the system as reading thoughts, it would still require the user to silently verbalize the words they wanted to say. (The plus side of this is that there is no risk of it accidentally reading a wearer’s most private thoughts.)

The future’s bright, but manage expectations

Wellington was clear that he wishes to “manage expectations.” Taking the noisy signal of brain waves and trying to pick out the all-important signal contained in it is difficult. He likened it to trying to have a phone conversation with a person who is outside in high wind, or even a hurricane. “If they’re shouting the same word over and over, yes, probably you’ll get it,” he said. “But a natural, full sentence? Probably not.”

This will hopefully change as the project advances and the researchers get better at extracting information from the brain signal. New machine learning techniques should push the capabilities of gaming headsets for better imagined natural speech reception. One challenge, which will prove worthwhile in the end, is that the researchers want to make sure that whatever hardware they use is affordable, practical, and mobile.

“[So far] we’ve managed to achieve some success in decoding imagined speech sounds from the brain signal,” Wellington said. “That is, imagine you were sounding out the English language phonically, as children do in school: ‘Aah,’ ‘buh,’ ‘kuh,’ ‘duh,’ ‘ehh,’ ‘guh,’ and so on. We’ve been able to translate these imagined sounds with a promising degree of accuracy. Of course, this is far from natural speech, but does already allow for a brain-computer interface that can translate a small ‘closed’ vocabulary of distinct words quite reliably. For example, if you wanted the device to speak, from your thoughts, the words for ‘up,’ ‘down,’ ‘left,’ ‘right,’ ‘start,’ ‘stop,’ ‘back,’ ‘forwards,’ [that would be possible].”

Wellington noted that he is excited about developments like Elon Musk’s Neuralink hardware, a “brain chip” that could be implanted beneath the skull, which could prove extremely transformative for work such as this. “As you can imagine, I was left wanting to know what we could achieve if such a device were implanted around the speech- and language-processing areas of the brain,” he said. “There’s definitely an exciting future ahead for this research!”

The work was presented at the Interspeech virtual conference in late October 2020.

Some parts of this article are sourced from:

digitaltrends.com
