If I told you to imagine something between a horse and a bird (say, a flying horse), would you need to see a concrete example? Such a creature does not exist, but nothing prevents us from using our imagination to create one: the Pegasus.

The human mind has all kinds of mechanisms to create new concepts by combining the abstract and concrete knowledge it has of the real world. We can imagine existing things that we might never have seen (a horse with a long neck: a giraffe), as well as things that do not exist in real life (a winged serpent that breathes fire: a dragon). This cognitive flexibility allows us to learn new things with few and sometimes no new examples.

In contrast, machine learning and deep learning, the current leading fields of artificial intelligence, are known to require many examples to learn new tasks, even when they are related to things they already know.

Overcoming this challenge has led to a host of research work and innovation in machine learning. And although we are still far from creating artificial intelligence that can replicate the brain’s capacity for understanding, the progress in the field is remarkable.

For instance, transfer learning is a technique that enables developers to fine-tune an artificial neural network for a new task without the need for many training examples. Few-shot and one-shot learning enable a machine learning model trained on one task to perform a related task with a single or very few new examples. For instance, if you have an image classifier trained to detect volleyballs and soccer balls, you can use one-shot learning to add basketballs to the list of classes it can detect.

A new technique dubbed “less-than-one-shot learning” (or LO-shot learning), recently developed by AI scientists at the University of Waterloo, takes one-shot learning to the next level. The idea behind LO-shot learning is that to train a machine learning model to detect M classes, you need less than one sample per class. The technique, introduced in a paper published on the arXiv preprint server, is still in its early stages but shows promise and can be useful in various scenarios where there is not enough data or there are too many classes.

The k-NN classifier

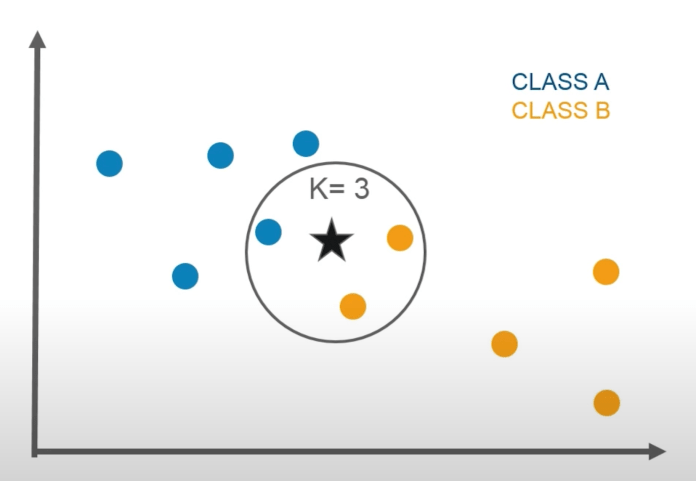

The k-NN machine learning algorithm classifies data by finding the closest instances.

The LO-shot learning technique proposed by the researchers applies to the “k-nearest neighbors” machine learning algorithm. k-NN can be used for both classification (determining the category of an input) and regression (predicting the outcome of an input) tasks. But for the sake of this discussion, we’ll stick to classification.

As the name implies, k-NN classifies input data by comparing it to its k nearest neighbors (k is an adjustable parameter). Say you want to create a k-NN machine learning model that classifies handwritten digits. First you provide it with a set of labeled images of digits. Then, when you provide the model with a new, unlabeled image, it will determine its class by looking at its nearest neighbors.

For instance, if you set k to 5, the machine learning model will find the five most similar digit images for each new input. If, say, three of them belong to the class “7,” it will classify the image as the digit 7.
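
The voting procedure described above can be sketched in a few lines of Python. This is a minimal illustration, not the researchers’ code: the function name `knn_classify`, the Euclidean distance metric, and the toy 2-D points standing in for digit images are all assumptions made for the example.

```python
from collections import Counter

import numpy as np


def knn_classify(train_x, train_y, query, k=5):
    """Classify `query` by majority vote among its k nearest training points."""
    # Euclidean distance from the query to every stored example (assumed metric).
    distances = np.linalg.norm(train_x - query, axis=1)
    # Indices of the k closest examples.
    nearest = np.argsort(distances)[:k]
    # The most common label among those neighbors wins.
    votes = Counter(int(train_y[i]) for i in nearest)
    return votes.most_common(1)[0][0]


# Toy 2-D "features" standing in for digit images (hypothetical data).
train_x = np.array([[0.0, 0.0], [0.1, 0.2], [0.2, 0.1],   # labeled as digit 7
                    [1.0, 1.0], [0.9, 1.1], [1.1, 0.9]])  # labeled as digit 1
train_y = np.array([7, 7, 7, 1, 1, 1])

# With k=5, three of the five nearest neighbors are 7s and two are 1s.
label = knn_classify(train_x, train_y, np.array([0.3, 0.3]), k=5)
print(label)
```

Note that every prediction computes a distance to every stored example, so the cost of classification grows with the size of the training set.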

k-NN is an “instance-based” machine learning algorithm. As you provide it with more labeled examples of each class, its accuracy improves but its runtime performance degrades, because each new sample adds new comparison operations.

In their LO-shot learning paper, the researchers showed that you can achieve accurate results with k-NN while providing fewer examples than there are classes. “We propose ‘less than one’-shot learning (LO-shot learning), a setting where a model must learn N new classes given only M < N examples, less than one example per class,” the AI researchers write. “At first glance, this appears to be an impossible task, but we both theoretically and empirically demonstrate feasibility.”

Machine learning with less than one example per class

The classic k-NN algorithm provides “hard labels,” which means for every input, it provides exactly one class to which it belongs. Soft labels, on the other hand, provide the probability that an input belongs to each of the output classes (e.g., there’s a 20% chance it’s a “2”, 70% chance it’s a “5,” and a 10% chance it’s a “3”).
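
To make the distinction concrete, here is a small sketch that stores the soft label from the example above as a mapping from class to probability and derives the corresponding hard label from it. The dictionary representation is just one convenient choice for illustration.

```python
# Soft label from the example above: one probability per candidate digit.
soft_label = {"2": 0.20, "5": 0.70, "3": 0.10}

# A hard label keeps only the most probable class and discards the rest.
hard_label = max(soft_label, key=soft_label.get)
print(hard_label)
```

The hard label throws away the information that the input also somewhat resembles a “2” and a “3”, which is exactly the information LO-shot learning relies on.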

In their work, the AI researchers at the University of Waterloo explored whether they could use soft labels to generalize the capabilities of the k-NN algorithm. The proposition of LO-shot learning is that soft label prototypes should allow the machine learning model to classify N classes with less than N labeled instances.

The technique builds on previous work the researchers had done on soft labels and data distillation. “Dataset distillation is a process for producing small synthetic datasets that train models to the same accuracy as training them on the full training set,” Ilia Sucholutsky, co-author of the paper, told TechTalks. “Before soft labels, dataset distillation was able to represent datasets like MNIST using as few as one example per class. I realized that adding soft labels meant I could actually represent MNIST using less than one example per class.”

MNIST is a database of images of handwritten digits often used in training and testing machine learning models. Sucholutsky and his colleague Matthias Schonlau managed to achieve above-90 percent accuracy on MNIST with just five synthetic examples on the convolutional neural network LeNet.

“That result really surprised me, and it’s what got me thinking more broadly about this LO-shot learning setting,” Sucholutsky said.

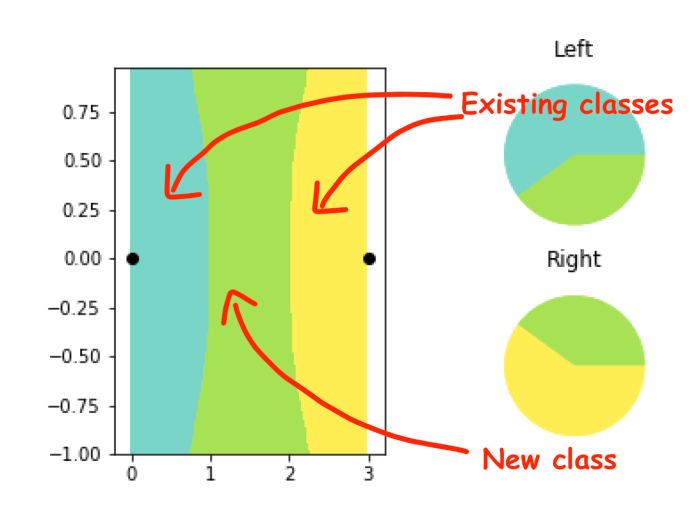

Basically, LO-shot learning uses soft labels to create new classes by partitioning the space between existing classes.

LO-shot learning uses soft labels to partition the space between existing classes.

In the example above, there are two instances to tune the machine learning model (shown with black dots). A classic k-NN algorithm would split the space between the two dots between the two classes. But the “soft-label prototype k-NN” (SLaPkNN) algorithm, as the LO-shot learning model is called, creates a new space between the two classes (the green area), which represents a new label (think horse with wings). Here we have achieved N classes with N-1 samples.
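
The two-dot example can be reproduced with a short sketch. The article does not spell out how SLaPkNN combines soft labels, so the code below assumes one plausible rule: sum the soft labels of the k nearest prototypes, weight each by the inverse of its distance to the query, and pick the class with the largest total. The function name `slapknn_predict`, the prototype positions, and the soft-label values are all hypothetical.

```python
import numpy as np


def slapknn_predict(prototypes, soft_labels, query, k=2, eps=1e-12):
    """Soft-label prototype k-NN, sketched with inverse-distance weighting."""
    distances = np.linalg.norm(prototypes - query, axis=1)
    nearest = np.argsort(distances)[:k]
    # Assumption: closer prototypes contribute more, weighted by 1 / distance.
    weights = 1.0 / (distances[nearest] + eps)
    # Weighted sum of the neighbors' soft labels gives one score per class.
    scores = weights @ soft_labels[nearest]
    return int(np.argmax(scores))


# Two prototypes on a line (the "black dots") carrying labels over three classes.
prototypes = np.array([[0.0], [1.0]])
soft_labels = np.array([[0.6, 0.4, 0.0],   # mostly class 0, partly class 1
                        [0.0, 0.4, 0.6]])  # mostly class 2, partly class 1

# Query points near the first dot, in the middle, and near the second dot.
predictions = [slapknn_predict(prototypes, soft_labels, np.array([x]))
               for x in (0.1, 0.5, 0.9)]
print(predictions)
```

Near either prototype its dominant class wins, but in the middle the shared class 1 collects weight from both sides and takes over, so two prototypes carve the line into three class regions.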

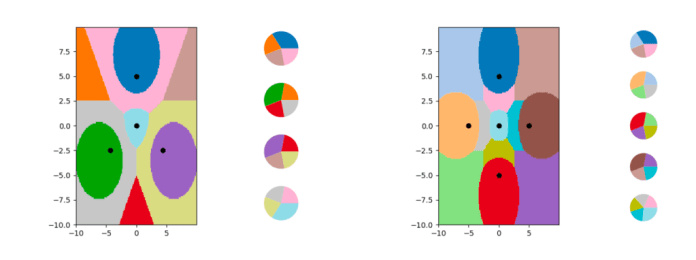

In the paper, the researchers show that LO-shot learning can be scaled up to detect 3N-2 classes using N labels and even beyond.

LO-shot learning can be extended to obtain multiple classes for each instance. Left: 10 classes obtained from four instances. Right: 13 classes obtained from five instances.

In their experiments, Sucholutsky and Schonlau found that with the right configurations for the soft labels, LO-shot machine learning can provide reliable results even when you have noisy data.

“I think LO-shot learning can be made to work from other sources of information as well, similar to how many zero-shot learning methods do, but soft labels are the most straightforward approach,” Sucholutsky said, adding that there are already several methods that can find the right soft labels for LO-shot machine learning.

While the paper displays the power of LO-shot learning with the k-NN classifier, Sucholutsky says the technique applies to other machine learning algorithms as well. “The analysis in the paper focuses specifically on k-NN just because it’s easier to analyze, but it should work for any classification model that can make use of soft labels,” Sucholutsky said. The researchers will soon release a more comprehensive paper that shows the application of LO-shot learning to deep learning models.

New venues for machine learning research

“For instance-based algorithms like k-NN, the efficiency improvement of LO-shot learning is quite large, especially for datasets with a large number of classes,” Sucholutsky said. “More broadly, LO-shot learning is useful in any kind of setting where a classification algorithm is applied to a dataset with a large number of classes, especially if there are few, or no, examples available for some classes. Basically, most settings where zero-shot learning or few-shot learning are useful, LO-shot learning can also be useful.”

For instance, a computer vision system that must identify thousands of objects from images and video frames can benefit from this machine learning technique, especially if there are no examples available for some of the objects. Another application would be to tasks that naturally have soft-label information, like natural language processing systems that perform sentiment analysis (e.g., a sentence can be both sad and angry simultaneously).

In their paper, the researchers describe “less than one”-shot learning as “a viable new direction in machine learning research.”

“We believe that creating a soft-label prototype generation algorithm that specifically optimizes prototypes for LO-shot learning is an important next step in exploring this area,” they write.

“Soft labels have been explored in several settings before. What’s new here is the extreme setting in which we explore them,” Sucholutsky said. “I think it just wasn’t a directly obvious idea that there is another regime hiding between one-shot and zero-shot learning.”

This article was originally published by Ben Dickson on TechTalks, a publication that examines trends in technology, how they affect the way we live and do business, and the problems they solve. But we also discuss the evil side of technology, the darker implications of new tech and what we need to look out for. You can read the original article here.

Ben Dickson

Some parts of this article are sourced from:

thenextweb.com