“The age of A.I. has begun,” Nvidia CEO Jensen Huang declared at this year’s GTC. At its GPU Technology Conference this year, Nvidia showcased its innovations to further A.I., noting how the technology could help solve the world’s problems 10 times better and faster.

While Nvidia is best known for its graphics cards, and more recently associated with real-time ray tracing, the company is also driving the behind-the-scenes innovation that brings artificial intelligence into our daily lives, from warehouse robots that pack our shipping orders, to self-driving cars and natural language bots that deliver news, search, and information with little latency or delay.

“We love working on extremely hard computing problems that have great impact on the world,” Huang said, noting that the company now has 110 SDKs that target the more than 1 billion CUDA-compatible GPUs that have been shipped. The company says more than 6,500 startups are building applications on Nvidia, joining the 2 million total Nvidia developers. “This is right in our wheelhouse. We’re all in to advance and democratize this new form of computing for the age of A.I. Nvidia is dedicated to advancing accelerated computing.”

Another apology for the RTX 3080/3090 launch

Huang led with another quick apology about the difficult launch of the Nvidia RTX 3080 and 3090 video cards.

Nvidia Omniverse is a training ground for robots

For gamers, ray tracing helps render vivid details in video game scenes by using the properties of light. Nvidia is using the same principles to build Nvidia Omniverse, which the company said is “a place where robots can learn how to be robots, just like they would in the real world.”

Available now in open beta, Nvidia Omniverse is an open platform for collaboration and simulation where robots can learn from realistic simulations of the real world. Using Omniverse, autonomous vehicles can quickly learn to drive and interact with scenarios that real human drivers may face, without the risk of endangering bystanders if the experiment goes sideways. Omniverse also allows for testing on a much wider scale, since an autonomous vehicle or robot doesn’t have to be physically deployed to test it.

To show how Nvidia Omniverse can affect us all, Nvidia highlighted how Omniverse can work in drug discovery, which is an even more important area of research given the global pandemic. Though it typically takes more than a decade and more than a half-billion dollars in research and development funding to develop a drug, 90% of those efforts fail, Huang said. To make matters worse, the cost of discovering new drugs doubles every nine years.
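The doubling claim implies exponential growth in cost. A minimal sketch of that arithmetic, assuming a smooth doubling every nine years and a purely hypothetical starting cost (the function name and the starting figure are illustrative, not figures from Nvidia):

```python
def projected_cost(start_cost: float, years: float, doubling_period: float = 9.0) -> float:
    """Cost after `years`, assuming it doubles every `doubling_period` years."""
    return start_cost * 2 ** (years / doubling_period)


# Hypothetical starting cost of 0.5 (in billions of dollars), for illustration only.
for years in (0, 9, 18, 27):
    print(years, projected_cost(0.5, years))
```

Under these assumptions, a cost that doubles every nine years grows eightfold over 27 years.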

Nvidia’s Omniverse can help researchers identify the proteins that can cause disease, as well as speed up testing of potential medicines by using A.I. and data analytics. All this is applied to Nvidia’s new Clara Discovery platform. And in the U.K., Nvidia launched its new Cambridge-1 data center, which the company says is the fastest in the region and one of the top 30 in the world, with 400 petaflops of A.I. performance.

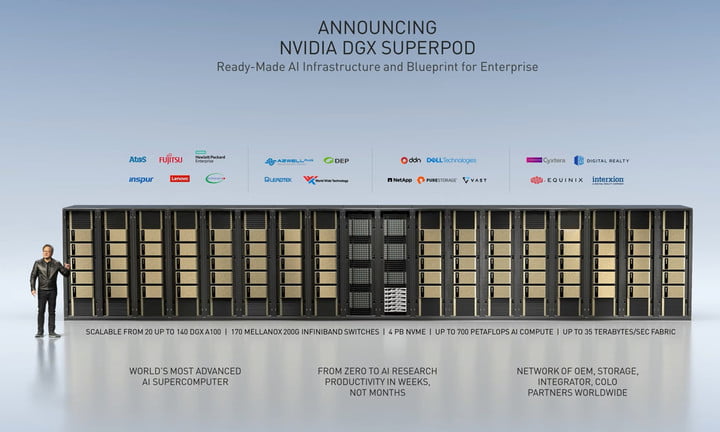

The company also introduced its new DGX SuperPOD architecture, which allows other researchers to build their own scalable supercomputers that link between 20 and 140 DGX systems.

Nvidia RTX A6000: Ray tracing for professionals

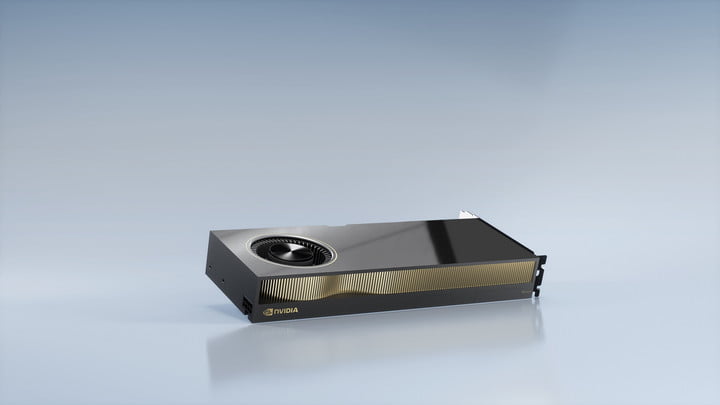

Expanding on the recently announced GeForce RTX 3070, RTX 3080, and RTX 3090 graphics cards, Nvidia announced a new generation of Ampere-based GPUs for professionals. The new graphics cards aren’t branded under Nvidia’s Quadro umbrella, but the RTX A6000 and Nvidia A40 GPUs are targeted at the same creative and data scientist audiences who buy the Quadro GPUs.

“The GPUs provide the speed and performance to enable engineers to develop innovative products, designers to create state-of-the-art buildings, and scientists to discover breakthroughs from anywhere in the world,” the company said in a blog post, noting that the new A6000 and A40 feature new RT cores, Tensor cores, and CUDA cores that are “significantly faster than the previous generations.”

The company did not provide specific details about the hardware. However, Nvidia claimed that the second-generation RT cores deliver 2x the throughput of the previous generation while also providing concurrent ray tracing, shading, and compute capabilities, and that the third-generation Tensor cores provide up to 5x the throughput of the previous generation.

The cards ship with 48GB of GPU memory that is expandable to 96GB with NVLink when two GPUs are connected. This compares to just 24GB of memory on the RTX 3090. While the RTX 3090 is marketed as a GPU that is capable of rendering games in 8K at 60 frames per second (fps), the expanded memory on the professional RTX A6000 and A40 helps process Blackmagic RAW 8K and 12K footage for video editing. Like the consumer Ampere cards, the A6000 and A40 GPUs are based on PCIe Gen 4, which delivers twice the bandwidth of the prior generation.

The A40-based servers will be available in systems from Cisco, Dell, Fujitsu, Hewlett Packard Enterprise, and Lenovo. The A6000 GPUs will be coming to channel partners, and both GPUs will be available early next year. Pricing details were not immediately available, and it is unclear if the professional cards will see the same limited supply and large shortages that Nvidia experienced with the launch of its consumer cards.

The rise of the A.I. bots

Nvidia also highlighted how its work on GPUs is helping to accelerate A.I. development and adoption. Facebook’s A.I. researchers have developed a chatbot with knowledge and empathy that half of the social network’s users actually preferred. California Institute of Technology researchers used reinforcement learning to train a drone’s flight control system to fly smoothly through turbulence and changes in terrain.

Nvidia’s A.I. is based on three pillars: single- to multi-GPU nodes on any framework or model, the use of inference, and the use of pretrained models, Huang said.

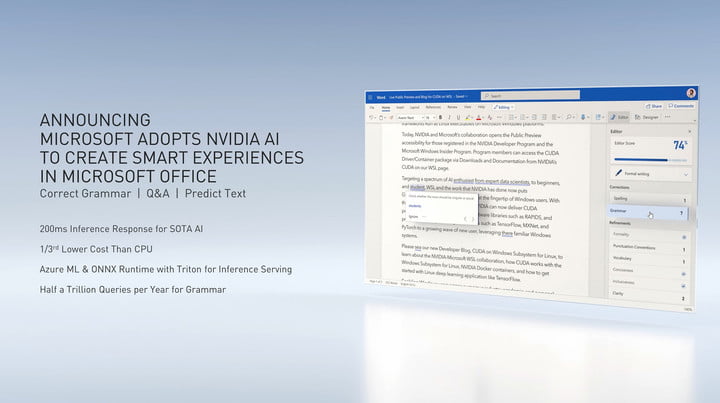

Nvidia also announced that it has partnered with Microsoft to bring Nvidia A.I. to Azure to help make Office smarter.

“Today, we’re announcing that Microsoft is adopting Nvidia A.I. on Azure, to power smart experiences in Microsoft Office,” Huang said during the keynote. “The world’s most popular productivity application used by hundreds of millions will now be A.I.-assisted. The first features will include smart grammar correction, Q&A, text prediction. Because of the volume of users and the instant response needed for a good experience, Office will be connected to Nvidia GPUs on Azure. With Nvidia GPUs, responses take less than 200 milliseconds. Our throughput lets Microsoft scale to millions of simultaneous users.”

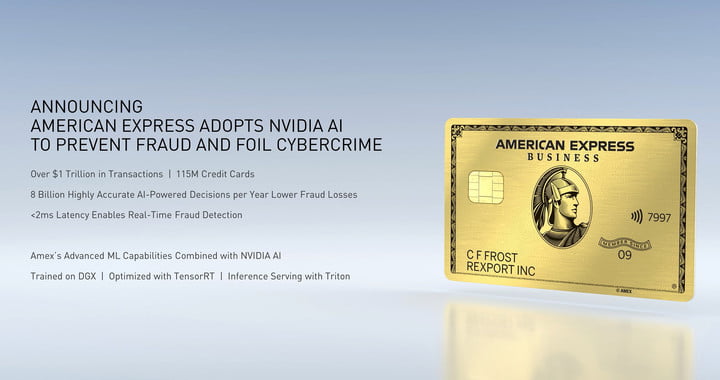

American Express is also using A.I. to combat fraud, while Twitter is leveraging artificial intelligence to help it understand and contextualize the vast number of videos uploaded to the platform.

With conversational A.I., results from voice queries processed on Nvidia’s GPU platform have half the latency of CPU-processed queries, and text-to-speech engines sound more realistic and human-like. Nvidia also announced an open beta of Jarvis for developers who want to try A.I. with conversational skills.

A.I. for the work-from-home future

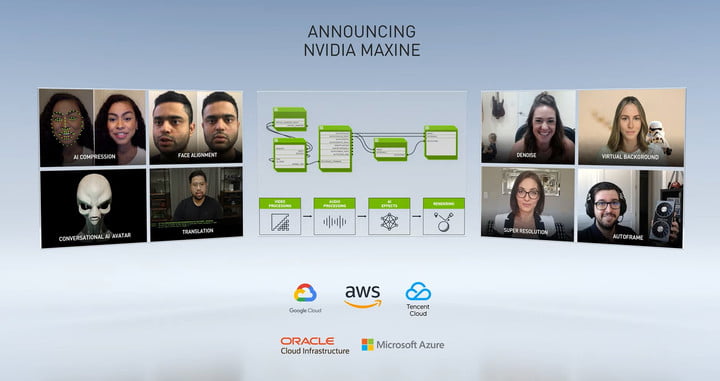

A.I. can also be built into applications like videoconferencing and chat solutions that help workers collaborate remotely. With Nvidia’s Video Maxine, Huang said that A.I. can do magic for video calls.

Maxine can identify the key features of a face, send only the changes in those features over the internet, and then reanimate the face at the receiver. This saves bandwidth, making for a better video experience in areas with poor internet connectivity. Huang said that bandwidth is reduced by a factor of 10.
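The "send only the changes" idea can be illustrated with a toy sketch. This is not Nvidia's implementation, which relies on neural networks to reanimate the face. It assumes each frame has already been reduced to a short list of hypothetical keypoint coordinates, and the function names `encode_delta` and `apply_delta` are made up for this example:

```python
from typing import Dict, List, Tuple

Point = Tuple[int, int]


def encode_delta(prev: List[Point], curr: List[Point]) -> Dict[int, Point]:
    """Sender side: keep only the keypoints that moved since the previous frame."""
    return {i: p for i, (q, p) in enumerate(zip(prev, curr)) if p != q}


def apply_delta(prev: List[Point], delta: Dict[int, Point]) -> List[Point]:
    """Receiver side: rebuild the current keypoints from the previous frame plus the delta."""
    return [delta.get(i, p) for i, p in enumerate(prev)]


# Hypothetical face keypoints (pixel coordinates) for two consecutive frames.
frame_a = [(120, 80), (160, 80), (140, 110), (125, 140), (155, 140)]
frame_b = [(120, 80), (160, 80), (140, 110), (126, 143), (154, 143)]

delta = encode_delta(frame_a, frame_b)
assert apply_delta(frame_a, delta) == frame_b
print(f"sent {len(delta)} of {len(frame_b)} keypoints")
```

Only the keypoints that moved cross the network here, while the receiver reconstructs the rest from what it already has. The actual savings in a real system depend on the codec and model, so the numbers in this toy example say nothing about Maxine's claimed tenfold reduction.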

A.I. can make calls better even in areas with high bandwidth, however. In the most extreme example, A.I. can be used to reorient your face so that you’re making eye contact with each person on a call, even when your face is tilted slightly away from the camera. A.I. can also reduce background noise, relight your face, replace the background, and improve video quality in poor lighting. Combined with Jarvis A.I. speech, Maxine can also generate closed caption text.

“We have an opportunity to revolutionize videoconferencing of today, and invent the virtual presence of tomorrow,” Huang said. “And video A.I. inference applications are coming from every industry.”

Bringing a data center to an ARM chip

Highlighting its investment in ARM chips, Nvidia announced the new BlueField DPUs, which bring the power of a data-center-infrastructure-on-a-chip and are supported by DOCA, short for data-center-infrastructure-on-a-chip architecture.

The new BlueField-2 DPUs offload critical tasks, like networking and storage, as well as security tasks from the CPU to help prevent cyberattacks.

“A single BlueField-2 DPU can deliver the same data center services that could consume up to 125 CPU cores,” Nvidia said in a prepared statement. “This frees up valuable CPU cores to run a wide range of other enterprise applications.” The company said that at least 30% of the CPU was previously consumed by running data center infrastructure, and these cores are freed up now that the work is offloaded to the BlueField DPU.

A second DPU, the BlueField-2X, also comes with Nvidia’s Ampere-based GPU technology. Ampere brings A.I. to the BlueField-2X to deliver real-time security analytics and identify malicious activity.

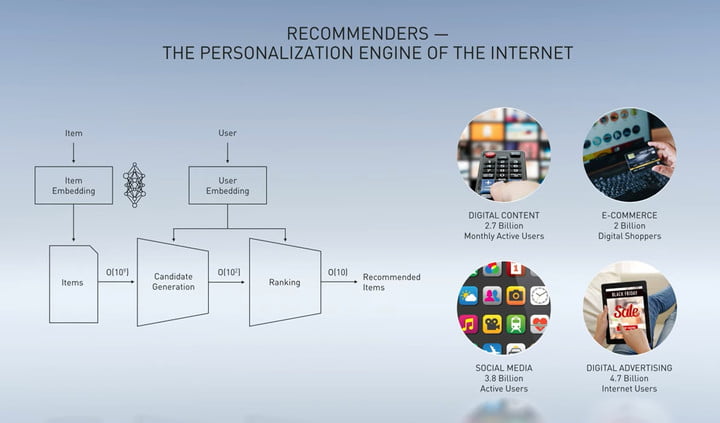

Personalized recommendation engines

A.I. can be used to deliver personalized recommendations of digital and physical goods on platforms, serving relevant digital ads, news, and videos. Nvidia said that even a 1% improvement in recommendation accuracy can amount to billions more in sales and higher customer retention.

To help businesses improve their recommendation engines, Nvidia launched Merlin, which is powered by the Nvidia Rapids platform. While CPU-based solutions can take days to learn, Merlin is said to be superfast and super-scalable, with cycle times going from a day to just three hours. Merlin is now in open beta, Huang said.

Rapids is used by Adobe for intelligent marketing, while Capital One is using the platform for fraud analytics and to power the company’s Eno chatbot.

A.I. for all the IoT

Nvidia’s EGX platform is used to bring A.I. to edge devices to make A.I. more responsive for Internet of Things, or IoT, applications. EGX is available on Nvidia’s NGC, and it is used by hospitals like Northwestern Memorial Hospital to offload to computers some tasks that are routinely performed by nurses. Patients, for example, can use natural language queries to ask a bot what procedure they are having.

“The EGX A.I. computer integrates a Mellanox BlueField-2 DPU and an Ampere GPU into a single PCI Express card, turning any standard OEM server into a secure accelerated A.I. data center,” Huang said.

The platform can be leveraged in health care, manufacturing, logistics, delivery, retail, and transportation.

Advancing ARM

“Today, we’re announcing a major initiative to advance the ARM platform,” Huang said of the company’s announced acquisition of ARM, noting that it is making investments in three dimensions.

“First, we’re complementing ARM partners with GPU, networking, storage, and security technologies to create complete accelerated platforms. Second, we’re working with our partners to create platforms for HPC, cloud, edge, and PC. This requires chips, systems, and system software. And third, we are porting the Nvidia A.I. and Nvidia RTX engines to ARM.”

Currently, these engines are only available on the x86 platform. However, Nvidia’s investment in ARM will turn it into a leading-edge platform for accelerated and A.I. computing, Huang said, as he looks to position ARM as a competitor to Intel in the server space.

Some parts of this article are sourced from:

www.digitaltrends.com