Security and IT teams are routinely pressured to adopt software before fully understanding the security risks. AI tools are no exception.

Employees and business leaders alike are flocking to generative AI software and similar programs, often unaware of the major SaaS security vulnerabilities they are introducing into the enterprise. A February 2023 generative AI survey of 1,000 executives revealed that 49% of respondents use ChatGPT now, and 30% plan to tap into the ubiquitous generative AI tool soon. Ninety-nine percent of those using ChatGPT claimed some form of cost savings, and 25% attested to reducing expenses by $75,000 or more. As the researchers conducted this survey only a few months after ChatGPT’s general availability, today’s ChatGPT and AI tool usage is undoubtedly higher.

Security and risk teams are already overwhelmed protecting their SaaS estate (which has now become the operating system of business) from common vulnerabilities such as misconfigurations and over-permissioned users. This leaves little bandwidth to assess the AI tool threat landscape, the unsanctioned AI tools currently in use, and the implications for SaaS security.

With threats emerging both outside and inside organizations, CISOs and their teams must understand the most relevant AI tool risks to SaaS systems, and how to mitigate them.

1 — Threat Actors Can Exploit Generative AI to Dupe SaaS Authentication Protocols

As ambitious employees devise ways for AI tools to help them accomplish more with less, so, too, do cybercriminals. Using generative AI with malicious intent is simply inevitable, and it is already possible.

AI’s ability to impersonate humans exceedingly well renders weak SaaS authentication protocols especially vulnerable to hacking. According to Techopedia, threat actors can misuse generative AI for password-guessing, CAPTCHA-cracking, and building more potent malware. While these methods may sound limited in their attack range, the January 2023 CircleCI security breach was attributed to a single engineer’s laptop becoming infected with malware.

Likewise, three noted technology academics recently posed a plausible hypothetical for generative AI running a phishing attack:

“A hacker uses ChatGPT to generate a personalized spear-phishing message based on your company’s marketing materials and phishing messages that have been successful in the past. It succeeds in fooling people who have been well trained in email awareness, because it doesn’t look like the messages they’ve been trained to detect.”

Malicious actors will avoid the most fortified entry point (typically the SaaS platform itself) and instead target more vulnerable side doors. They won’t bother with the deadbolt and guard dog at the front door when they can sneak around back to the unlocked patio doors.

Relying on authentication alone to keep SaaS data secure is not a viable option. Beyond implementing multi-factor authentication (MFA) and physical security keys, security and risk teams need visibility and continuous monitoring across the entire SaaS perimeter, along with automated alerts for suspicious login activity.
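To make "automated alerts for suspicious login activity" concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `LoginEvent` fields, the three alert rules, and the thresholds are hypothetical and do not reflect any particular SaaS platform's log format or any vendor's detection logic.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class LoginEvent:
    """Hypothetical, simplified login record (not a real platform schema)."""
    user: str
    timestamp: datetime
    country: str
    success: bool
    mfa_used: bool


def suspicious_logins(events, max_failures=5, window=timedelta(minutes=10)):
    """Return (event, reason) pairs for three illustrative alert rules."""
    alerts = []
    seen_countries = {}   # user -> countries of earlier successful logins
    recent_failures = {}  # user -> failure timestamps still inside the window

    for event in sorted(events, key=lambda e: e.timestamp):
        # Keep only this user's failures that fall inside the time window.
        failures = [t for t in recent_failures.get(event.user, [])
                    if event.timestamp - t <= window]

        if not event.success:
            failures.append(event.timestamp)
            recent_failures[event.user] = failures
            # Alert once, at the moment the threshold is reached.
            if len(failures) == max_failures:
                alerts.append((event, "repeated failed logins"))
            continue

        recent_failures[event.user] = failures
        known = seen_countries.setdefault(event.user, set())
        if known and event.country not in known:
            alerts.append((event, "login from new country"))
        if not event.mfa_used:
            alerts.append((event, "successful login without MFA"))
        known.add(event.country)

    return alerts
```

In practice the events would come from each SaaS platform's audit log, and the rules would be tuned to the organization's own baseline; the point is only that such checks can run continuously instead of relying on the login prompt alone.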

These insights are needed not only for cybercriminals’ generative AI activities but also for employees’ AI tool connections to SaaS platforms.

2 — Employees Connect Unsanctioned AI Tools to SaaS Platforms Without Considering the Risks

Employees are now relying on unsanctioned AI tools to make their jobs easier. After all, who wants to work harder when AI tools increase effectiveness and efficiency? Like any form of shadow IT, employee adoption of AI tools is driven by the best intentions.

For example, an employee is convinced they could manage their time and to-dos better, but the effort to monitor and analyze their task management and meeting involvement feels like a large undertaking. AI can perform that monitoring and analysis with ease and provide recommendations almost instantly, giving the employee the productivity boost they crave in a fraction of the time. Signing up for an AI scheduling assistant, from the end user’s perspective, is as simple and (seemingly) innocuous as:

- Registering for a free trial or enrolling with a credit card

- Agreeing to the AI tool’s Read/Write permission requests

- Connecting the AI scheduling assistant to their corporate Gmail, Google Drive, and Slack accounts

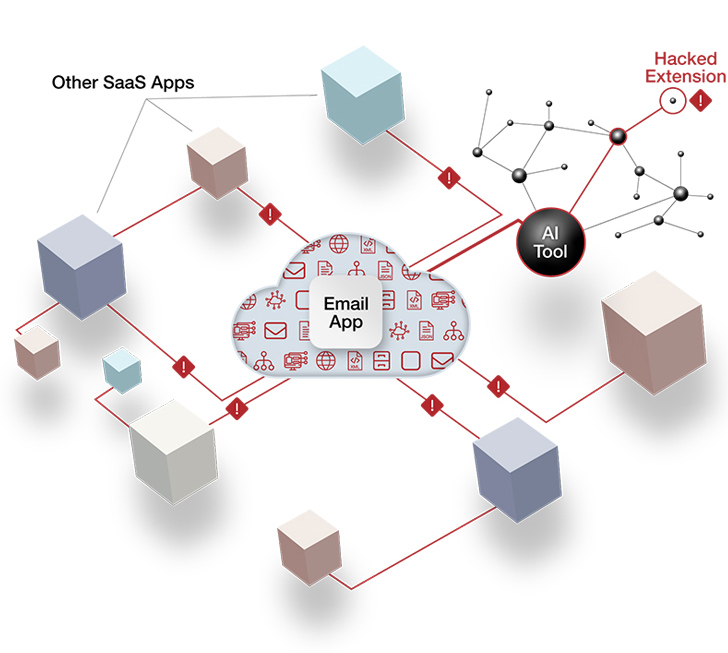

This process, however, creates invisible conduits to an organization’s most sensitive data. These AI-to-SaaS connections inherit the user’s permission settings, allowing a hacker who successfully compromises the AI tool to move quietly and laterally across the authorized SaaS systems. A hacker can access and exfiltrate data until suspicious activity is noticed and acted on, which can take anywhere from months to years.

AI tools, like most SaaS apps, use OAuth access tokens for ongoing connections to SaaS platforms. Once the authorization is complete, the token for the AI scheduling assistant will maintain consistent, API-based communication with Gmail, Google Drive, and Slack accounts, all without requiring the user to log in or re-authenticate at any regular interval. The threat actor who can capitalize on this OAuth token has stumbled on the SaaS equivalent of spare keys “hidden” under the doormat.

Figure 1: An illustration of an AI tool establishing an OAuth token connection with a major SaaS platform. Credit: AppOmni
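The reach of such a connection comes from the scopes attached to the OAuth grant. The sketch below shows one way a reviewer might model grants and flag the broad ones. The `OAuthGrant` record, the `BROAD_SCOPES` policy, and the app and user names are hypothetical; the scope strings follow Google and Slack naming conventions, but which scopes count as "broad" is an assumption made here, not an official classification.

```python
from dataclasses import dataclass

# Scopes treated as "broad" for this illustration (an assumed policy).
BROAD_SCOPES = frozenset({
    "https://mail.google.com/",               # full Gmail access
    "https://www.googleapis.com/auth/drive",  # full Google Drive access
    "chat:write",                             # post messages in Slack
})


@dataclass(frozen=True)
class OAuthGrant:
    """Hypothetical record of one user authorizing one third-party app."""
    app_name: str
    user: str
    platform: str
    scopes: frozenset


def broad_grants(grants):
    """Return (grant, sorted broad scopes) for grants holding any broad scope."""
    findings = []
    for grant in grants:
        overlap = sorted(grant.scopes & BROAD_SCOPES)
        if overlap:
            findings.append((grant, overlap))
    return findings


# Made-up example data: one AI scheduling assistant, one read-only viewer.
grants = [
    OAuthGrant("ai-scheduler", "pat@example.com", "google",
               frozenset({"https://mail.google.com/",
                          "https://www.googleapis.com/auth/drive"})),
    OAuthGrant("ai-scheduler", "pat@example.com", "slack",
               frozenset({"chat:write", "channels:history"})),
    OAuthGrant("calendar-viewer", "sam@example.com", "google",
               frozenset({"https://www.googleapis.com/auth/calendar.readonly"})),
]

for grant, scopes in broad_grants(grants):
    print(f"{grant.app_name} on {grant.platform} for {grant.user}: {scopes}")
```

Because the grant persists until someone revokes it, a review like this is only useful if it is repeated; a single audit at sign-up time would miss later scope changes.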

Security and risk teams often lack the SaaS security tooling to monitor or control this kind of attack surface risk. Legacy tools like cloud access security brokers (CASBs) and secure web gateways (SWGs) won’t detect or alert on AI-to-SaaS connectivity.

Yet these AI-to-SaaS connections aren’t the only means by which employees can unintentionally expose sensitive data to the outside world.

3 — Sensitive Information Shared with Generative AI Tools Is Susceptible to Leaks

The data employees submit to generative AI tools, often with the goal of expediting work and improving its quality, can end up in the hands of the AI provider itself, an organization’s competitors, or the general public.

Because most generative AI tools are free and exist outside the organization’s tech stack, security and risk professionals have no oversight or security controls for these tools. This is a growing concern among enterprises, and generative AI data leaks have already happened.

A March 2023 incident inadvertently enabled ChatGPT users to see other users’ chat titles and histories in the website’s sidebar. Concern arose not just over leaks of sensitive organizational information but also over user identities being revealed and compromised. OpenAI, the developer of ChatGPT, announced the ability for users to turn off chat history. In theory, this option stops ChatGPT from sending data back to OpenAI for product improvement, but it requires employees to manage data retention settings themselves. Even with this setting enabled, OpenAI retains conversations for 30 days and reserves the right to review them “for abuse” prior to their expiration.

This bug and the data retention fine print haven’t gone unnoticed. In May 2023, Apple restricted employees from using ChatGPT over concerns about confidential data leaks. While the tech giant took this stance as it builds its own generative AI tools, it joined enterprises such as Amazon, Verizon, and JPMorgan Chase in the ban. Apple also directed its developers to avoid GitHub Copilot, owned by top competitor Microsoft, for automating code.

Common generative AI use cases are replete with data leak risks. Consider a product manager who prompts ChatGPT to make the message in a product roadmap document more compelling. That product roadmap almost certainly contains product information and plans never intended for public consumption, let alone a competitor’s prying eyes. A similar ChatGPT bug, which an organization’s IT team has no ability to escalate or remediate, could result in serious data exposure.

Stand-alone generative AI does not create SaaS security risk. But what’s isolated today is connected tomorrow. Ambitious employees will naturally seek to extend the usefulness of unsanctioned generative AI tools by integrating them into SaaS applications. Currently, ChatGPT’s Slack integration demands more work than the average Slack connection, but it’s not an exceedingly high bar for a savvy, motivated employee. The integration uses OAuth tokens exactly like the AI scheduling assistant example described above, exposing an organization to the same risks.

How Organizations Can Safeguard Their SaaS Environments from Significant AI Tool Risks

Organizations need guardrails in place for AI tool data governance, specifically for their SaaS environments. This requires comprehensive SaaS security tooling and proactive cross-functional diplomacy.

Employees use unsanctioned AI tools largely because of limitations in the approved tech stack. The desire to boost productivity and improve quality is a virtue, not a vice. There’s an unmet need, and CISOs and their teams should approach employees with an attitude of collaboration rather than condemnation.

Good-faith conversations with leaders and end users about their AI tool requests are vital to building trust and goodwill. At the same time, CISOs must convey legitimate security concerns and the potential ramifications of risky AI behavior. Security leaders should consider themselves the accountants who explain the best ways to work within the tax code rather than the IRS auditors perceived as enforcers unconcerned with anything beyond compliance. Whether it’s putting proper security settings in place for the desired AI tools or sourcing viable alternatives, the most successful CISOs strive to help employees maximize their productivity.

Fully understanding and addressing the risks of AI tools requires a comprehensive and robust SaaS security posture management (SSPM) solution. SSPM gives security and risk practitioners the insights and visibility they need to navigate the ever-changing state of SaaS risk.

To improve authentication strength, security teams can use SSPM to enforce MFA across all SaaS apps in the estate and monitor for configuration drift. SSPM enables security teams and SaaS app owners to enforce best practices without studying the intricacies of each SaaS app and AI tool setting.
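Configuration drift monitoring boils down to comparing each app's current settings against an approved baseline. The Python sketch below illustrates the idea only; the setting names (`mfa_required`, `session_timeout_minutes`) and the dictionary layout are assumptions, and a real SSPM product would pull these values from each platform's own admin interfaces.

```python
def detect_drift(baseline, current):
    """Return {app: {setting: (expected, actual)}} for settings that differ.

    Both arguments map an app name to a dict of settings. A setting that is
    missing from the current snapshot is reported with an actual value of None.
    """
    drift = {}
    for app, expected_settings in baseline.items():
        actual_settings = current.get(app, {})
        changed = {
            key: (expected, actual_settings.get(key))
            for key, expected in expected_settings.items()
            if actual_settings.get(key) != expected
        }
        if changed:
            drift[app] = changed
    return drift


# Hypothetical baseline and snapshot for two apps.
baseline = {
    "slack": {"mfa_required": True, "session_timeout_minutes": 60},
    "google_workspace": {"mfa_required": True},
}
current = {
    "slack": {"mfa_required": False, "session_timeout_minutes": 60},
    "google_workspace": {"mfa_required": True},
}

print(detect_drift(baseline, current))
```

Here the only finding is that MFA enforcement has been switched off for the hypothetical Slack tenant, which is exactly the kind of silent change that weakens authentication without anyone logging a ticket.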

The ability to inventory unsanctioned and approved AI tools connected to the SaaS ecosystem will reveal the most urgent risks to investigate. Continuous monitoring automatically alerts security and risk teams when new AI connections are established. This visibility plays a substantial role in reducing the attack surface and taking action when an unsanctioned, unsecure, and/or over-permissioned AI tool surfaces in the SaaS ecosystem.
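As a rough illustration of the inventory and alerting steps described above, the following Python sketch classifies connected apps against an approved list and reports connections that appeared since the previous snapshot. The function names, app names, and the idea of periodic snapshots are assumptions made for the example, not a description of how any SSPM product works internally.

```python
def classify_connections(connected_apps, sanctioned_apps):
    """Split the connected apps into sanctioned and unsanctioned lists."""
    connected = set(connected_apps)
    sanctioned = set(sanctioned_apps)
    return {
        "sanctioned": sorted(connected & sanctioned),
        "unsanctioned": sorted(connected - sanctioned),
    }


def new_connections(previous_snapshot, current_snapshot):
    """Return apps present now that were absent from the previous snapshot."""
    return sorted(set(current_snapshot) - set(previous_snapshot))


# Hypothetical snapshots of third-party apps connected to a SaaS tenant.
sanctioned = ["approved-notes-ai"]
yesterday = ["approved-notes-ai"]
today = ["approved-notes-ai", "ai-scheduler", "chat-summarizer"]

print(classify_connections(today, sanctioned))
print(new_connections(yesterday, today))
```

Running the comparison on every snapshot turns the inventory into an alert source: anything returned by `new_connections` that also lands in the unsanctioned list is a candidate for immediate review.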

AI tool reliance will almost certainly continue to spread rapidly. Outright bans are never foolproof. Instead, a pragmatic combination of security leaders who share their peers’ goal of boosting productivity and reducing repetitive tasks, coupled with the right SSPM solution, is the best approach to drastically cutting down SaaS data exposure or breach risk.

Some parts of this article are sourced from:

thehackernews.com
