Cybersecurity researchers have discovered a critical security flaw in the artificial intelligence (AI)-as-a-service provider Replicate that could have allowed threat actors to gain access to proprietary AI models and sensitive information.

“Exploitation of this vulnerability would have allowed unauthorized access to the AI prompts and results of all Replicate’s platform customers,” cloud security firm Wiz said in a report published this week.

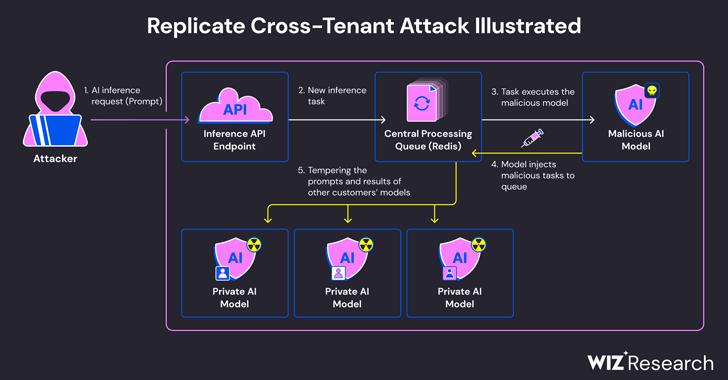

The issue stems from the fact that AI models are typically packaged in formats that allow arbitrary code execution, which an attacker could weaponize to perform cross-tenant attacks by means of a malicious model.
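To make the packaging risk concrete, here is a minimal sketch using Python's `pickle` module, a serialization format that is known to run code on load. It is an illustration only: the report quoted here does not say which format was involved, and the `DisguisedModel` class is a hypothetical stand-in that calls a harmless function.

```python
import os
import pickle


class DisguisedModel:
    """Hypothetical stand-in for a model file; not taken from any real attack."""

    def __reduce__(self):
        # pickle records "call os.getpid()" instead of this object's state.
        # Any importable callable could be named here; os.getpid is harmless.
        return (os.getpid, ())


# The "model file" an untrusted party would hand over.
payload = pickle.dumps(DisguisedModel())

# Loading the file is enough to run the callable inside the loader's process.
loaded = pickle.loads(payload)

print(type(loaded).__name__)  # an int, not a DisguisedModel
print(loaded == os.getpid())  # the call ran in this very process
```

The loader never asked to execute anything, yet the value it gets back is whatever the embedded callable returned. That is why a model from an untrusted source has to be treated as code rather than as data.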

Replicate uses an open-source tool called Cog to containerize and package machine learning models, which can then be deployed either in a self-hosted environment or to Replicate.

Wiz said that it created a rogue Cog container and uploaded it to Replicate, ultimately using it to achieve remote code execution on the service’s infrastructure with elevated privileges.

“We suspect this code-execution technique is a pattern, where companies and organizations run AI models from untrusted sources, even though these models are code that could potentially be malicious,” security researchers Shir Tamari and Sagi Tzadik said.

The attack technique devised by the company then leveraged an already-established TCP connection associated with a Redis server instance within the Kubernetes cluster hosted on the Google Cloud Platform to inject arbitrary commands.

What’s more, because the centralized Redis server is used as a queue to manage multiple customer requests and their responses, it could be abused to facilitate cross-tenant attacks by tampering with the process in order to insert rogue tasks that could impact the results of other customers’ models.

These rogue manipulations not only threaten the integrity of the AI models, but also pose significant risks to the accuracy and reliability of AI-driven outputs.
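The shared-queue risk described above can be sketched with a small in-memory simulation. Everything here is hypothetical: a `deque` stands in for the centralized Redis queue, and the task layout, tenant names, and `str.upper` "model" are assumptions made for illustration, not details of Replicate's infrastructure.

```python
from collections import deque

# One queue shared by every tenant (stand-in for the centralized Redis queue).
shared_queue = deque()


def submit(tenant, prompt):
    """Normal path: a tenant enqueues its own request."""
    shared_queue.append({"tenant": tenant, "prompt": prompt})


def run_worker(model):
    """Process every queued task and file each result under its tenant label."""
    results = {}
    while shared_queue:
        task = shared_queue.popleft()
        results.setdefault(task["tenant"], []).append(model(task["prompt"]))
    return results


submit("victim", "summarize the quarterly report")

# Anyone able to write to the queue directly can enqueue a task under another
# tenant's name, because the queue itself does not check who the writer is.
shared_queue.append({"tenant": "victim", "prompt": "attacker-chosen prompt"})

results = run_worker(str.upper)
print(results["victim"])
```

In this toy setup the victim's results now include output for a prompt the victim never sent, which is the kind of tampering with other customers' results that the researchers describe.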

“An attacker could have queried the private AI models of customers, potentially exposing proprietary knowledge or sensitive data involved in the model training process,” the researchers said. “Additionally, intercepting prompts could have exposed sensitive data, including personally identifiable information (PII).”

The shortcoming, which was responsibly disclosed in January 2024, has since been addressed by Replicate. There is no evidence that the vulnerability was exploited in the wild to compromise customer data.

The disclosure comes a little over a month after Wiz detailed now-patched risks in platforms like Hugging Face that could allow threat actors to escalate privileges, gain cross-tenant access to other customers’ models, and even take over the continuous integration and continuous deployment (CI/CD) pipelines.

“Malicious models represent a major risk to AI systems, especially for AI-as-a-service providers because attackers may leverage these models to perform cross-tenant attacks,” the researchers concluded.

“The potential impact is devastating, as attackers may be able to access the millions of private AI models and apps stored within AI-as-a-service providers.”

Some parts of this article are sourced from:

thehackernews.com
