One of the things that caught my eye at Nvidia’s flagship event, the GPU Technology Conference (GTC), was Maxine, a platform that leverages artificial intelligence to improve the quality and experience of video-conferencing applications in real time.

Maxine uses deep learning for resolution improvement, background noise reduction, video compression, face alignment, and real-time translation and transcription.

In this post, which marks the first installment of our “deconstructing artificial intelligence” series, we will take a look at how some of these features work and how they tie in with AI research done at Nvidia. We’ll also explore the pending issues and the possible business model for Nvidia’s AI-powered video-conferencing platform.

Super-resolution with neural networks

The first feature shown in the Maxine presentation is “super resolution,” which according to Nvidia, “can convert lower resolutions to higher resolution videos in real time.” Super resolution enables video-conference callers to send low-res video streams and have them upscaled at the server. This reduces the bandwidth requirement of video-conferencing applications and can make their performance more stable in areas where network connectivity is unreliable.

The big challenge of upscaling visual data is filling in the missing information. You have a limited array of pixels that represent an image, and you want to expand it to a larger canvas that contains many more pixels. How do you decide what color values those new pixels get?

Older upscaling techniques use different interpolation methods (bicubic, Lanczos, etc.) to fill the space between pixels. These techniques are too general and might provide mixed results across different types of images and backgrounds.
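To make the idea of interpolation concrete, here is a minimal sketch in plain Python. It uses bilinear interpolation, a simpler relative of the bicubic and Lanczos methods mentioned above, and the function name and toy image are made up for illustration. Every new pixel is just a weighted average of its nearest source pixels, which is why this family of methods cannot invent detail that was never in the low-res image.

```python
def upscale_bilinear(image, factor):
    """Upscale a 2D grayscale image (a list of rows) by an integer factor."""
    src_h, src_w = len(image), len(image[0])
    dst_h, dst_w = src_h * factor, src_w * factor
    out = []
    for y in range(dst_h):
        # Map the output row back to a fractional row in the source image.
        sy = y * (src_h - 1) / (dst_h - 1) if dst_h > 1 else 0.0
        y0 = int(sy)
        y1 = min(y0 + 1, src_h - 1)
        wy = sy - y0
        row = []
        for x in range(dst_w):
            sx = x * (src_w - 1) / (dst_w - 1) if dst_w > 1 else 0.0
            x0 = int(sx)
            x1 = min(x0 + 1, src_w - 1)
            wx = sx - x0
            # Blend the four surrounding source pixels.
            top = image[y0][x0] * (1 - wx) + image[y0][x1] * wx
            bottom = image[y1][x0] * (1 - wx) + image[y1][x1] * wx
            row.append(top * (1 - wy) + bottom * wy)
        out.append(row)
    return out


# Toy 2x2 "image" upscaled to 4x4.
low_res = [[0.0, 100.0],
           [100.0, 200.0]]
high_res = upscale_bilinear(low_res, 2)
```

The corner pixels keep their original values, and everything in between is a smooth gradient. That works for flat regions but tends to blur edges and fine texture such as hair or eyes.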

One of the benefits of machine learning algorithms is that they can be tuned to perform very specific tasks. For instance, a deep neural network can be trained on scaled-down video frames grabbed from video-conference streams and their corresponding hi-res original images. With enough examples, the neural network will tune its parameters to the general features found in video-conference visual data (mostly faces) and will be able to provide a better low- to hi-res conversion than general-purpose upscaling algorithms. In general, the narrower the domain, the better the chances of the neural network converging on very high accuracy.
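The training setup described above can be sketched in a few lines. This is only an illustration of how (low-res input, hi-res target) pairs might be built, not Nvidia’s actual pipeline: the helper names, the 2x box-filter downscaling, and the tiny synthetic frame are all assumptions.

```python
def downscale_box(frame, factor):
    """Shrink a 2D grayscale frame by averaging factor x factor blocks."""
    h, w = len(frame) // factor, len(frame[0]) // factor
    return [
        [
            sum(frame[y * factor + dy][x * factor + dx]
                for dy in range(factor) for dx in range(factor)) / factor ** 2
            for x in range(w)
        ]
        for y in range(h)
    ]


def make_training_pairs(hi_res_frames, factor=2):
    """Return (low_res_input, hi_res_target) pairs for supervised training."""
    return [(downscale_box(f, factor), f) for f in hi_res_frames]


# One synthetic 4x4 frame standing in for a captured video-conference frame.
frames = [[[float(4 * y + x) for x in range(4)] for y in range(4)]]
pairs = make_training_pairs(frames)
low, high = pairs[0]
```

A super-resolution network would then be trained to map each `low` back to its `high`. Because every pair comes from the same narrow domain, the network only has to learn what faces in front of a camera look like.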

There’s already a solid body of research on using artificial neural networks for upscaling visual data, including a 2017 Nvidia paper that discusses general super resolution with deep neural networks. With video conferencing being a very specialized case, a well-trained neural network is bound to perform even better than it would on more general tasks. Aside from video conferencing, there are applications for this technology in other areas, such as the film industry, which uses deep learning to remaster old videos to higher quality.

Video compression with neural networks

One of the more interesting parts of the Maxine presentation was the AI video compression feature. The video posted on Nvidia’s YouTube channel shows that using neural networks to compress video streams reduces bandwidth from ~97 KB/frame to ~0.12 KB/frame, which is a bit exaggerated, as users have pointed out on Reddit. Nvidia’s website states developers can reduce bandwidth use down to “one-tenth of the bandwidth needed for the H.264 video compression standard,” which is a much more reasonable, and still impressive, figure.
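A quick back-of-the-envelope calculation shows how far apart the two claims are. The per-frame sizes come from the demo video and the one-tenth figure from Nvidia’s website. The 30 frames-per-second rate is an assumption added here only to turn per-frame sizes into per-second numbers.

```python
# Figures quoted in the demo video (KB per frame).
demo_before_kb = 97.0
demo_after_kb = 0.12

# Reduction factor implied by the demo: roughly 800x.
demo_ratio = demo_before_kb / demo_after_kb

# Reduction factor claimed on the website: one-tenth, i.e. 10x.
website_ratio = 10.0

# How much more aggressive the demo figure is than the website figure.
gap = demo_ratio / website_ratio

# Assumed frame rate, used only to express the sizes per second.
FPS = 30
before_kb_per_s = demo_before_kb * FPS
after_kb_per_s = demo_after_kb * FPS

print(f"demo reduction:    {demo_ratio:.0f}x")
print(f"website reduction: {website_ratio:.0f}x")
print(f"demo stream: {before_kb_per_s:.0f} KB/s -> {after_kb_per_s:.1f} KB/s at {FPS} fps")
```

The demo numbers imply a reduction roughly 80 times larger than the one Nvidia states on its website, which explains the skepticism.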

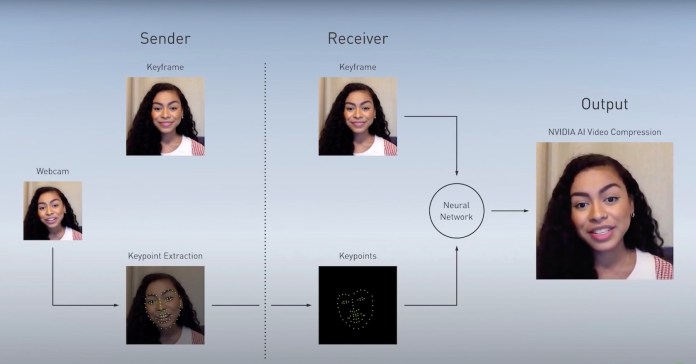

How does Nvidia’s AI achieve such impressive compression rates? A blog post on Nvidia’s website provides more detail on how the technology works. A neural network extracts and encodes the locations of key facial features of the user for every frame, which is much more efficient than compressing pixel and color data. The encoded data is then passed on to a generative adversarial network (GAN) along with a reference video frame captured at the beginning of the session. The GAN is trained to reconstruct the new image by projecting the facial features onto the reference frame.

Deep neural networks extract and encode key facial features. Generative adversarial networks then project those encodings onto a reference frame with the user’s face
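To see why sending facial keypoints is so much cheaper than sending pixels, consider the rough sketch below. It is not Nvidia’s encoding: the number of keypoints (68), the half-precision packing, and the function names are assumptions chosen for illustration, and the GAN that would turn the decoded keypoints back into an image is left out entirely.

```python
import struct

NUM_KEYPOINTS = 68  # assumption for illustration, not Maxine's actual count


def encode_keypoints(points):
    """Pack (x, y) pairs, normalized to [0, 1], as half-precision floats."""
    flat = [coord for point in points for coord in point]
    return struct.pack(f"<{len(flat)}e", *flat)


def decode_keypoints(payload):
    """Unpack the payload back into a list of (x, y) pairs."""
    count = len(payload) // 2  # two bytes per half-precision value
    flat = struct.unpack(f"<{count}e", payload)
    return list(zip(flat[0::2], flat[1::2]))


# Synthetic keypoints standing in for a landmark detector's output.
points = [(i / NUM_KEYPOINTS, 1 - i / NUM_KEYPOINTS) for i in range(NUM_KEYPOINTS)]
payload = encode_keypoints(points)
decoded = decode_keypoints(payload)

# An uncompressed 720p RGB frame, for a sense of scale.
raw_frame_bytes = 1280 * 720 * 3

print(f"keypoint payload: {len(payload)} bytes")
print(f"raw 720p frame:   {raw_frame_bytes} bytes")
```

Under these assumptions, the per-frame payload is a few hundred bytes, while the reference frame is sent only once per session. The price is that the receiver needs a model capable of rebuilding a plausible face from those few numbers.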

The work builds on previous GAN research done at Nvidia, which mapped rough sketches to rich, detailed images and drawings.

The AI video compression shows once again how narrow domains provide excellent settings for the use of deep learning algorithms.

Face realignment with deep learning

The face alignment feature readjusts the angle of users’ faces to make it appear as if they are looking directly at the camera. This is a very common problem in video conferencing because people tend to look at the faces of others on the screen rather than gaze at the camera.

Although there isn’t much detail about how this works, the blog post mentions that they use GANs. It’s not hard to see how this feature can be bundled with the AI compression/decompression technology. Nvidia has already done extensive research on landmark detection and encoding, including the extraction of facial features and gaze direction at different angles. The encodings can be fed to the same GAN that projects the facial features onto the reference image, which then does the rest.

Where does Maxine run its deep learning models?

There are a lot of other neat features in Maxine, including the integration with JARVIS, Nvidia’s conversational AI platform. Getting into all of that would be beyond the scope of this article.

But some technical issues remain to be resolved. For instance, one issue is how much of Maxine’s functionality will run on cloud servers and how much of it on user devices. In response to a query from TechTalks, a spokesperson for Nvidia said, “NVIDIA Maxine is designed to execute the AI features in the cloud so that every user [can] access them, regardless of the device they’re using.”

This makes sense for some of the features, such as super resolution, virtual background, auto-frame, and noise reduction. But it seems pointless for others. Take, for example, the AI video compression feature. Ideally, the neural network doing the facial expression encoding must run on the sender’s device, and the GAN that reconstructs the video frame must run on the receiver’s device. If all these functions are carried out on servers, there would be no bandwidth savings, because users would send and receive full frames instead of the much lighter facial expression encodings.

Ideally, there should be some sort of configuration that allows users to choose the right balance between local and cloud AI inference, depending on the network and compute resources available to them. For instance, a user who has a workstation with a strong GPU card might want to run all deep learning models on their computer in exchange for lower bandwidth usage or cost savings. On the other hand, a user joining a conference from a mobile device with low processing power would forgo the local AI compression and defer virtual background and noise reduction to the Maxine server.
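Such a configuration could be as simple as the sketch below. It is purely hypothetical: Maxine exposes no such setting as far as this article knows, and the feature names, the `Device` class, and the `choose_placement` function are invented to illustrate the two scenarios just described.

```python
from dataclasses import dataclass

# Hypothetical feature names, used only for this illustration.
FEATURES = (
    "ai_compression",
    "super_resolution",
    "virtual_background",
    "noise_reduction",
)


@dataclass
class Device:
    """Minimal description of the machine a user joins the call from."""
    has_strong_gpu: bool


def choose_placement(device):
    """Decide where each feature runs: 'local', 'cloud', or 'disabled'."""
    if device.has_strong_gpu:
        # A capable workstation runs everything itself and saves bandwidth.
        return {feature: "local" for feature in FEATURES}
    # A weak device offloads inference to the server. AI compression only
    # saves bandwidth when it runs on the endpoints, so it is switched off.
    placement = {feature: "cloud" for feature in FEATURES}
    placement["ai_compression"] = "disabled"
    return placement


workstation = choose_placement(Device(has_strong_gpu=True))
phone = choose_placement(Device(has_strong_gpu=False))
```

A real policy would likely weigh more than one flag (battery, available bandwidth, per-minute cloud cost), but the trade-off it encodes is the same one described above.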

What is Maxine’s business model?

With the covid-19 pandemic pushing companies to implement remote-working protocols, it seems as good a time as any to market video-conferencing apps. And with AI still at the peak of its hype cycle, companies have a tendency to rebrand their products as “AI-powered” to boost sales. So, I’m generally a bit skeptical about anything that has “video conferencing” and “AI” in its name these days, and I think many of them will not live up to the promise.

But I have a few reasons to believe Nvidia’s Maxine will succeed where others fail. First, Nvidia has a track record of doing reliable deep learning research, especially in computer vision and more recently in natural language processing. The company also has the infrastructure and financial means to continue to develop and improve its AI models and make them available to its customers. Nvidia’s GPU servers and its partnerships with cloud providers will enable it to scale as its customer base grows. And its recently announced acquisition of mobile chipmaker ARM will put it in a suitable position to move some of these AI capabilities to the edge (maybe a Maxine-powered video-conferencing camera in the future?).

Finally, Maxine is an ideal example of narrow AI being put to good use. As opposed to computer vision applications that try to address a wide range of issues, all of Maxine’s features are tailored for a special setting: a person talking to a camera. As various experiments have shown, even the most advanced deep learning algorithms lose their accuracy and stability as their problem domain expands. Conversely, neural networks are more likely to capture the real data distribution as their problem domain becomes narrower.

But as we’ve seen on these pages before, there’s a huge difference between an interesting piece of technology that works and one that has a successful business model.

Maxine is currently in early access mode, so a lot of things might change in the future. For the moment, Nvidia plans to make it available as an SDK and a set of APIs hosted on Nvidia’s servers that developers can integrate into their video-conferencing applications. Corporate video conferencing already has two big players, Teams and Zoom. Teams already has plenty of AI-powered features, and it wouldn’t be hard for Microsoft to add some of the functionalities Maxine offers.

What will be the final pricing model for Maxine? Will the benefits provided by the bandwidth savings be enough to justify those costs? Will there be incentives for large players such as Zoom and Microsoft Teams to partner with Nvidia, or will they add their own versions of the same features? Will Nvidia continue with the SDK/API model or develop its own standalone video-conferencing platform? Nvidia will have to answer these and many other questions as developers explore its new AI-powered video-conferencing platform.

This article was originally published by Ben Dickson on TechTalks, a publication that examines trends in technology, how they affect the way we live and do business, and the problems they solve. But we also discuss the evil side of technology, the darker implications of new tech and what we need to look out for. You can read the original article here.

Ben Dickson

Some parts of this article are sourced from:

thenextweb.com