In the short time since their inception, ChatGPT and other generative AI platforms have rightfully gained a reputation as ultimate productivity boosters. However, the very same technology that enables rapid production of high-quality text on demand can also expose sensitive corporate data. A recent incident, in which Samsung software engineers pasted proprietary code into ChatGPT, clearly demonstrates that this tool can easily become a data leakage channel. This vulnerability poses a demanding challenge for security stakeholders, since none of the existing data security tools can ensure that no sensitive data is exposed to ChatGPT. In this article we’ll explore this security challenge in detail and show how browser security solutions can address it, all while enabling organizations to fully realize ChatGPT’s productivity potential without having to compromise on data security.

The ChatGPT data protection blind spot: How can you govern text insertion in the browser?

Whenever an employee pastes or types text into ChatGPT, the text is no longer controlled by the company’s data protection tools and policies. It doesn’t matter whether the text was copied from a traditional data file, an online doc, or another source. That, in fact, is the problem. Data Leak Prevention (DLP) solutions, from on-prem agents to CASB, are all file-oriented. They apply policies to files based on their content, preventing actions such as modifying, downloading, sharing, and more. However, this capability is of little use for ChatGPT data protection, because no files are involved in ChatGPT. Instead, usage involves pasting copied text snippets or typing directly into a web page, which is beyond the governance and control of any existing DLP product.

How browser security solutions prevent insecure data usage in ChatGPT

LayerX launched its browser security platform for continuous monitoring, risk analysis, and real-time protection of browser sessions. Delivered as a browser extension, LayerX has granular visibility into every event that takes place within the session. This enables LayerX to detect risky behavior and configure policies that prevent pre-defined actions from taking place.

In the context of protecting sensitive data from being uploaded to ChatGPT, LayerX leverages this visibility to single out attempted text insertion events, such as ‘paste’ and ‘type’, in the ChatGPT tab. If the text content in the ‘paste’ event violates the corporate data protection policies, LayerX will prevent the action altogether.

To enable this capability, security teams using LayerX should define the phrases or regular expressions they want to protect from exposure. Then, they need to create a LayerX policy that is triggered whenever there’s a match with these strings.
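The matching step described above can be illustrated with a minimal Python sketch. It is not LayerX's implementation or API: the `PastePolicy` class, the `should_block_paste` helper, and the example patterns (a "Project Atlas" codename and a `corp.example.com` hostname) are all hypothetical, chosen only to show how a list of phrases and regular expressions might be checked against text before it reaches the page.

```python
import re
from typing import List


class PastePolicy:
    """Hypothetical policy: a named set of phrases/regexes that must not be pasted."""

    def __init__(self, name: str, patterns: List[str]) -> None:
        self.name = name
        # Compile once; IGNORECASE so "Confidential" and "CONFIDENTIAL" both match.
        self._compiled = [re.compile(p, re.IGNORECASE) for p in patterns]

    def violations(self, text: str) -> List[str]:
        """Return the source patterns that match anywhere in the text."""
        return [p.pattern for p in self._compiled if p.search(text)]


def should_block_paste(text: str, policies: List[PastePolicy]) -> bool:
    """True if the text matches at least one pattern in any policy."""
    return any(policy.violations(text) for policy in policies)


# Illustrative patterns only; a real deployment would define its own.
SOURCE_CODE_POLICY = PastePolicy(
    name="protect-internal-code",
    patterns=[
        r"\bconfidential\b",             # classification marker
        r"\bproject\s+atlas\b",          # hypothetical internal codename
        r"[\w.-]+\.corp\.example\.com",  # hypothetical internal hostname
    ],
)

if __name__ == "__main__":
    snippet = "def deploy():  # Project Atlas, CONFIDENTIAL"
    print(should_block_paste(snippet, [SOURCE_CODE_POLICY]))
    print(SOURCE_CODE_POLICY.violations(snippet))
```

In a browser extension, a check like this would run inside the handler for the paste event, and the event would be cancelled when the function returns true. That wiring is omitted here because it depends on the extension's own architecture.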

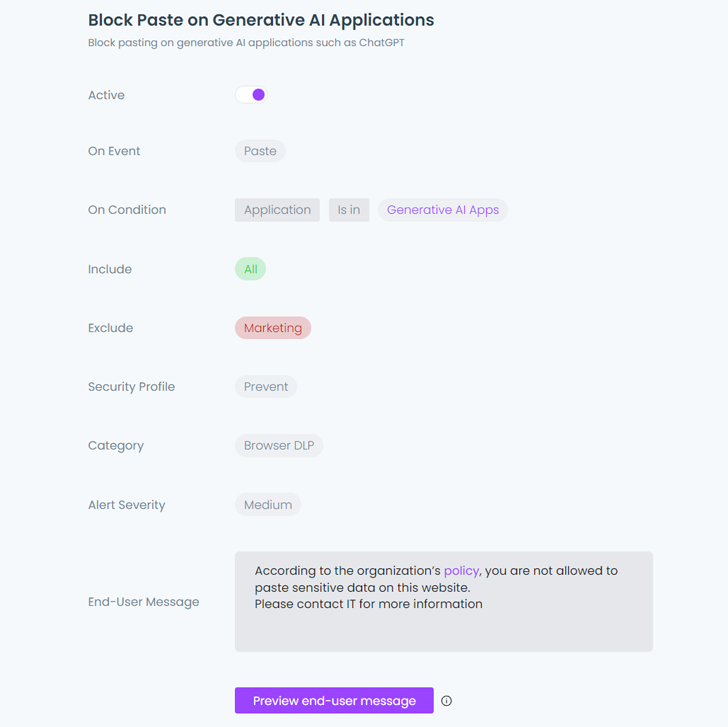

See what it looks like in action:

Setting the policy in the LayerX Dashboard

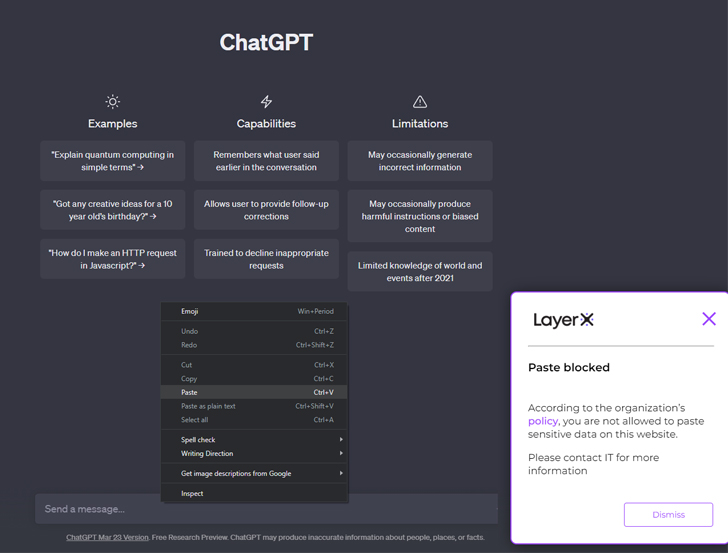

A user who tries to copy sensitive information into ChatGPT gets blocked by LayerX

In addition, organizations that want to prevent their employees from using ChatGPT altogether can use LayerX to block access to the ChatGPT website or to any other online AI-based text generators, including ChatGPT-like browser extensions.
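As a rough illustration of site-level blocking, the sketch below checks a URL's hostname against a blocklist, matching both the listed domain and its subdomains. The `is_blocked` function and the domain list are assumptions made for this example (`example-ai-writer.com` is a placeholder), and the sketch says nothing about how LayerX itself identifies AI tools or browser extensions.

```python
from urllib.parse import urlsplit

# Illustrative blocklist; the entries are assumptions, not a vetted list.
BLOCKED_DOMAINS = frozenset({"chat.openai.com", "example-ai-writer.com"})


def is_blocked(url: str, blocked=BLOCKED_DOMAINS) -> bool:
    """True if the URL's host is a blocked domain or one of its subdomains."""
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    # Require an exact match or a dot-delimited suffix so that
    # "notchat.openai.com.evil.test" does not match "chat.openai.com".
    return any(host == d or host.endswith("." + d) for d in blocked)


if __name__ == "__main__":
    print(is_blocked("https://chat.openai.com/c/123"))
    print(is_blocked("https://docs.python.org/3/"))
```

Matching on a dot-delimited suffix rather than a plain substring avoids both false positives on lookalike hosts and accidental gaps for subdomains.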

Learn more about LayerX ChatGPT data protection here.

Using LayerX’s browser security platform to gain comprehensive SaaS protection

What makes LayerX the only solution that can effectively address the ChatGPT data protection gap is its placement in the browser itself, with real-time visibility and policy enforcement on the actual browser session. This approach also makes it an ideal solution for protecting against any cyber threat that targets data or user activity in the browser, as is the case with SaaS applications.

Users interact with SaaS apps through their browsers. This makes it easy for LayerX to protect both the data within these apps and the apps themselves. It does so by enforcing the following types of policies on users’ activities throughout their web sessions:

Data protection policies: On top of standard file-oriented protection (prevention of copy/share/download/etc.), LayerX provides the same granular protection it does for ChatGPT. In fact, once the organization has defined which inputs it bans from being pasted, the same policies can be expanded to prevent exposing this data to any web or SaaS location.

Account compromise mitigation: LayerX monitors each user’s activities on the organization’s SaaS apps. The platform will detect any anomalous behavior or data interaction that indicates that the user’s account is compromised. LayerX policies will then either terminate the session or disable the user’s data interaction abilities in the app.

Learn more about LayerX ChatGPT data protection here.

Some parts of this article are sourced from:

thehackernews.com
